The right way to measure AI executive assistant ROI in the first 30 days is to treat month one as a finance-and-measurement exercise, not as a generic "pilot went well" narrative. A serious buyer should be able to show the baseline economics of the workflow, the observed benefit, the observed cost, and the remaining uncertainty. That does not mean month one must show fully annualized ROI. It means the economics should be transparent enough that a buyer, procurement lead, or CFO can tell whether the pilot is generating credible proof of value or just optimistic language. McKinsey continues to show that AI adoption does not automatically equal scaled value, and OpenAI reports that the strongest gains come from structured, repeatable workflows rather than casual experimentation.

This article is intentionally about measurement and buyer reporting. If you need the operational guide for how to run the pilot itself, use How to Run a 30-Day AI Executive Assistant Pilot. If you want the broader annual model after month one, see the ROI calculator for AI executive assistants.

In the first 30 days, ROI is best treated as measured proof of value, not as a heroic annual savings claim.

At this stage, the finance questions are:

- What was the manual cost baseline for the workflows in scope?

- What benefits were actually observed during the pilot?

- What new costs were introduced by software, implementation, and review overhead?

- Is the pilot net-positive, break-even, or still below the line?

- If the accounting ROI is weak, is the underlying signal still strong enough to justify a controlled second phase?

That framing matters because quick wins still need controls. Microsoft's 2025 Work Trend Index describes pressure to redesign work around human-agent teams, while NIST's Generative AI Profile and the OECD's workplace AI guidance reinforce that value should be measured alongside oversight, accountability, and clearly bounded use.

Before calculating ROI, build the manual baseline for the exact workflows in scope.

For each workflow, capture:

| Baseline field | What to record |
|---|---|
| Manual time per task | Average minutes spent today |
| Monthly volume | How many times the task occurs in a typical month |
| Fully loaded labor rate | Hourly cost for the EA, chief of staff, executive reviewer, or delegate involved |
| Current failure or delay cost | Rework, missed follow-up, backlog, or scheduling churn caused by the manual process |

Use a simple baseline table like this:

| Workflow | Manual minutes per task | Monthly volume | Primary owner today | Loaded hourly rate | Monthly manual labor cost |
|---|---|---|---|---|---|
| Morning brief | 15 | 20 | EA | $55 | (15/60) x 20 x 55 = $275 |
| Recurring meeting prep | 20 | 16 | EA / chief of staff | $55 | (20/60) x 16 x 55 = $293 |
| Scheduling proposals | 10 | 32 | EA | $55 | (10/60) x 32 x 55 = $293 |
| Follow-up drafting | 12 | 20 | EA | $55 | (12/60) x 20 x 55 = $220 |

The baseline formula is straightforward:

monthly manual labor cost = (manual minutes per task / 60) x monthly volume x loaded hourly rate
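Here is a minimal Python sketch of the baseline formula. It uses the four rows from the example table. The `BaselineRow` type and the function name are illustrative, not part of any product or library.

```python
from dataclasses import dataclass


@dataclass
class BaselineRow:
    """One workflow from the manual baseline table."""
    workflow: str
    manual_minutes_per_task: float
    monthly_volume: int
    loaded_hourly_rate: float  # fully loaded $/hour for today's owner


def monthly_manual_labor_cost(row: BaselineRow) -> float:
    """(manual minutes per task / 60) x monthly volume x loaded hourly rate."""
    return (row.manual_minutes_per_task / 60) * row.monthly_volume * row.loaded_hourly_rate


baseline = [
    BaselineRow("Morning brief", 15, 20, 55),
    BaselineRow("Recurring meeting prep", 20, 16, 55),
    BaselineRow("Scheduling proposals", 10, 32, 55),
    BaselineRow("Follow-up drafting", 12, 20, 55),
]

for row in baseline:
    print(f"{row.workflow}: ${monthly_manual_labor_cost(row):,.0f}")

# Summing the rounded row values gives the $1,081 baseline used in the reporting template.
total_rounded = sum(round(monthly_manual_labor_cost(row)) for row in baseline)
print(f"Total: ${total_rounded:,}")
```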

If more than one person touches the workflow, split the baseline by role. That matters because executive review minutes are more expensive than EA minutes, and hidden reviewer cost can erase apparent savings.
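To see why the role split matters, apply the same formula once per role. In the sketch below, every minute, rate, and volume is a hypothetical assumption chosen for illustration, not a benchmark.

```python
# Hypothetical split for one workflow: the EA prepares, the executive reviews.
# Minutes, rates, and volume are illustrative assumptions, not benchmarks.
role_minutes_per_task = {"EA": 12, "Executive reviewer": 3}
role_loaded_rate = {"EA": 55.0, "Executive reviewer": 250.0}
monthly_volume = 16


def baseline_by_role(minutes: dict[str, float], rates: dict[str, float],
                     volume: int) -> dict[str, float]:
    """Apply the baseline formula separately to every role that touches the task."""
    return {role: (m / 60) * volume * rates[role] for role, m in minutes.items()}


by_role = baseline_by_role(role_minutes_per_task, role_loaded_rate, monthly_volume)
for role, cost in by_role.items():
    print(f"{role}: ${cost:,.2f}")
print(f"Workflow total: ${sum(by_role.values()):,.2f}")
```

With these assumed rates, three executive minutes per task cost more than twelve EA minutes. That is the kind of hidden reviewer cost a single blended rate would hide.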

Most weak month-one ROI decks make one mistake: they put all observed minutes on the benefit side and forget to price the new work introduced by the system.

Keep the model clean:

| Benefit side | Cost side |
|---|---|
| Manual labor avoided | Software or pilot fee |
| Reduced rework or coordination churn | Implementation and setup time |
| Faster turnaround on recurring workflows | Reviewer approval and correction time |
| Better same-day follow-through on bounded work | Security, legal, or IT review time tied to the pilot |
| Lower backlog on repetitive coordination tasks | Training and change-management time |

This split is more useful for procurement and finance because it shows whether value is operationally real or only looks good when overhead is ignored.

In the first 30 days, use formulas that buyers can audit quickly.

gross labor value = hours avoided x loaded hourly rate

Where:

hours avoided = ((baseline minutes per task - post-AI minutes per task) x monthly volume) / 60
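The two formulas above translate directly into code. In this sketch, the post-AI time of 5 minutes for the morning brief is a hypothetical assumption, not an observed result.

```python
def hours_avoided(baseline_minutes: float, post_ai_minutes: float, monthly_volume: int) -> float:
    """((baseline minutes per task - post-AI minutes per task) x monthly volume) / 60."""
    return ((baseline_minutes - post_ai_minutes) * monthly_volume) / 60


def gross_labor_value(hours: float, loaded_hourly_rate: float) -> float:
    """gross labor value = hours avoided x loaded hourly rate."""
    return hours * loaded_hourly_rate


# Illustrative only: assume the 15-minute morning brief drops to 5 minutes of EA time.
hours = hours_avoided(baseline_minutes=15, post_ai_minutes=5, monthly_volume=20)
gross = gross_labor_value(hours, loaded_hourly_rate=55)
print(f"Hours avoided: {hours:.2f}")  # (15 - 5) x 20 / 60
print(f"Gross labor value: ${gross:,.2f}")
```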

net labor value = gross labor value - reviewer overhead cost - correction cost

Where:

reviewer overhead cost = reviewer hours x reviewer loaded hourly rate

correction cost = correction hours x correcting role loaded hourly rate

This is the most important month-one formula because raw automation numbers are misleading if the human now spends too long editing, validating, or rerouting the result.
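The sketch below shows how quickly review and correction time can erase gross savings. The review and correction hours, and the $250/h executive rate, are hypothetical assumptions.

```python
def net_labor_value(gross: float,
                    reviewer_hours: float, reviewer_rate: float,
                    correction_hours: float, correction_rate: float) -> float:
    """net labor value = gross labor value - reviewer overhead cost - correction cost."""
    reviewer_overhead_cost = reviewer_hours * reviewer_rate
    correction_cost = correction_hours * correction_rate
    return gross - reviewer_overhead_cost - correction_cost


# Hypothetical month: about $183 of gross EA value, but the executive spends
# 30 minutes reviewing ($250/h) and the EA spends 1 hour correcting ($55/h).
gross = (10 * 20 / 60) * 55
net = net_labor_value(gross, reviewer_hours=0.5, reviewer_rate=250,
                      correction_hours=1.0, correction_rate=55)
print(f"Gross: ${gross:,.2f}  Net: ${net:,.2f}")
```

Under these assumptions the workflow looks attractive on gross value but nets to only a few dollars.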

If the pilot reduces missed follow-ups, scheduling churn, or duplicate prep work, calculate that separately:

rework reduction value = rework hours avoided x loaded hourly rate

Keep this line conservative. Only count rework that was actually observed or logged during the pilot.

total quantified benefit = net labor value + rework reduction value + any other directly observed, priced benefit

If a benefit cannot be measured credibly in month one, describe it qualitatively instead of pricing it aggressively.
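A minimal sketch of the rework and total-benefit lines follows. The $400 net labor value and the 2 logged rework hours are hypothetical inputs.

```python
def rework_reduction_value(rework_hours_avoided: float, loaded_hourly_rate: float) -> float:
    """Only pass rework hours that were actually observed or logged during the pilot."""
    return rework_hours_avoided * loaded_hourly_rate


def total_quantified_benefit(net_labor: float, rework_value: float,
                             other_observed_priced: float = 0.0) -> float:
    """net labor value + rework reduction value + any other directly observed, priced benefit."""
    return net_labor + rework_value + other_observed_priced


# Hypothetical inputs: $400 of net labor value and 2 logged rework hours at $55/h.
# Benefits that could not be measured stay out of the sum and are described in prose.
rework = rework_reduction_value(2, 55)
benefit = total_quantified_benefit(400.0, rework)
print(f"Rework reduction value: ${rework:,.2f}")
print(f"Total quantified benefit: ${benefit:,.2f}")
```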

Now calculate what the pilot actually cost.

| Cost category | Month-one formula |
|---|---|
| Software cost | monthly license or pilot fee |
| Implementation cost | setup hours x loaded hourly rate of internal team, or vendor cost |
| Reviewer overhead | review hours x reviewer loaded hourly rate |
| Correction cost | rewrite/correction hours x correcting role loaded hourly rate |
| Security / legal / IT cost | governance hours attributable to the pilot x loaded hourly rate |
| Training cost | training hours x participant loaded hourly rate |

Then calculate:

total month-one cost = software cost + implementation cost + reviewer overhead + correction cost + governance cost + training cost

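This is the line item serious buyers often undercount. In month one, implementation and reviewer overhead can be material. That does not invalidate the pilot. It just means the finance story should be honest. One caution: net labor value already subtracts reviewer overhead and correction cost. If total quantified benefit is built on net labor value, leave those two lines out of the cost total when calculating ROI, or build the benefit on gross labor value instead. Counting them on both sides penalizes the pilot twice.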
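The cost side can be captured in one small record. In this sketch, all dollar figures and the $90/h IT rate are hypothetical. The `total_excluding_review` subtotal is an illustrative helper for cases where the benefit side is already net of review and correction.

```python
from dataclasses import dataclass


@dataclass
class MonthOneCosts:
    """Month-one cost lines in dollars (each is a fee or hours x loaded rate)."""
    software: float           # monthly license or pilot fee
    implementation: float     # setup hours x loaded rate, or vendor cost
    reviewer_overhead: float  # review hours x reviewer loaded rate
    correction: float         # correction hours x correcting role's loaded rate
    governance: float         # security / legal / IT hours attributable to the pilot
    training: float           # training hours x participant loaded rate

    def total(self) -> float:
        """total month-one cost, summing all six lines."""
        return (self.software + self.implementation + self.reviewer_overhead
                + self.correction + self.governance + self.training)

    def total_excluding_review(self) -> float:
        """Subtotal for ROI when the benefit side is already net of review and correction."""
        return self.total() - self.reviewer_overhead - self.correction


# Hypothetical month-one figures, for illustration only.
costs = MonthOneCosts(
    software=500,
    implementation=8 * 55,  # 8 setup hours at $55/h
    reviewer_overhead=125,
    correction=55,
    governance=2 * 90,      # 2 hours of IT review at an assumed $90/h
    training=3 * 55,        # 3 training hours at $55/h
)
print(f"Total month-one cost: ${costs.total():,.0f}")
print(f"Excluding review and correction: ${costs.total_excluding_review():,.0f}")
```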

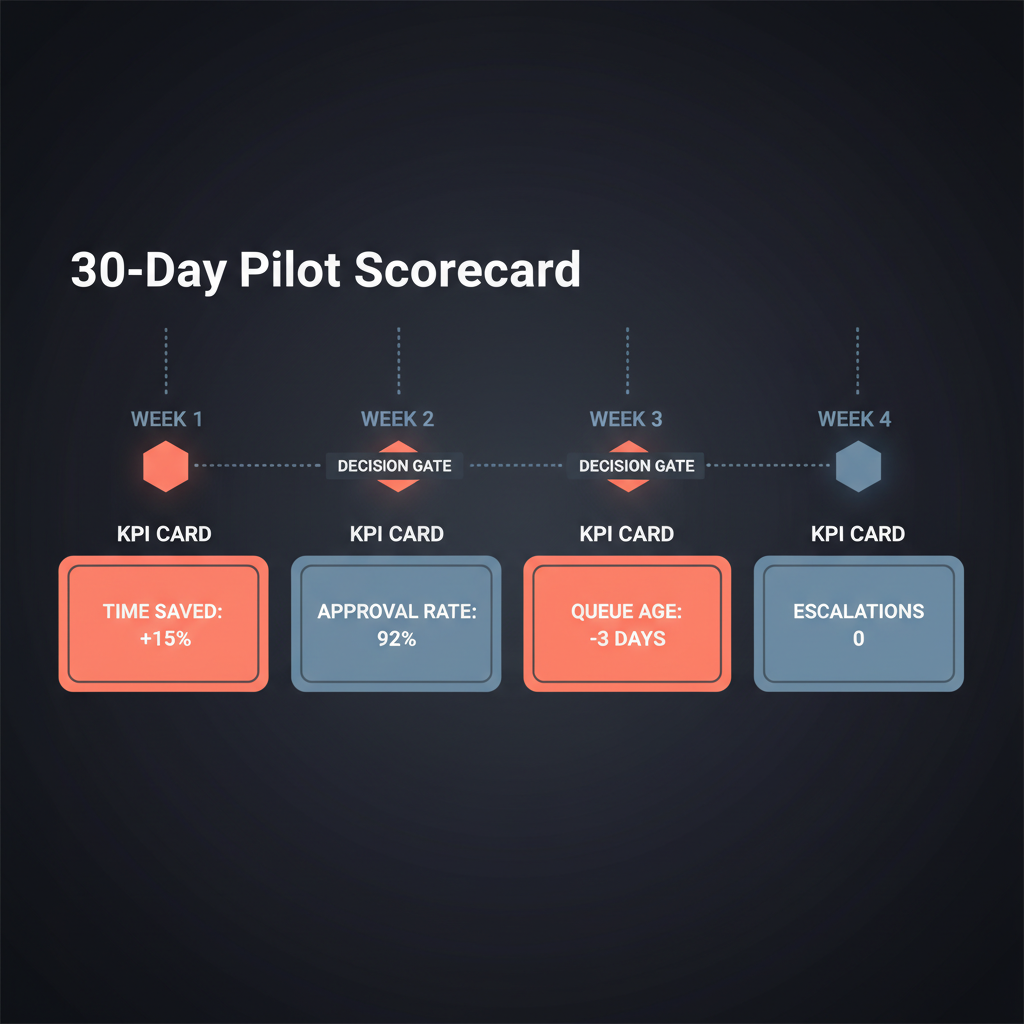

Once benefit and cost are separated, the scorecard becomes much cleaner.

| Metric | Formula | Why buyers care |
|---|---|---|
| Net minutes saved | baseline minutes - post-AI minutes - review/correction minutes | Shows whether the workflow is actually lighter |
| Net labor value | net minutes saved / 60 x loaded hourly rate | Converts time into priced value |
| Month-one ROI % | ((total quantified benefit - total month-one cost) / total month-one cost) x 100 | Shows whether the pilot is already above the line |
| Payback multiple | total quantified benefit / total month-one cost | Useful when buyers prefer a simple cost-cover ratio |
| Approval-with-light-edits rate | approved as-is or lightly edited outputs / total reviewed outputs | Indicates whether the system is creating usable work |
| Rewrite rate | heavily rewritten outputs / total reviewed outputs | Flags hidden labor drag |
| Escalation accuracy | correctly escalated sensitive items / total sensitive items observed | Measures governance quality alongside savings |
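The scorecard formulas are simple enough to compute in a few helper functions. In this sketch, the dollar totals and output counts are hypothetical. Raising an error on an empty denominator is a design choice: it keeps an unmeasured metric from being reported as zero.

```python
def month_one_roi_pct(benefit: float, cost: float) -> float:
    """((total quantified benefit - total month-one cost) / total month-one cost) x 100."""
    if cost <= 0:
        raise ValueError("total month-one cost must be positive")
    return (benefit - cost) / cost * 100


def payback_multiple(benefit: float, cost: float) -> float:
    """total quantified benefit / total month-one cost."""
    if cost <= 0:
        raise ValueError("total month-one cost must be positive")
    return benefit / cost


def share(count: int, total: int) -> float:
    """Ratio used for the approval, rewrite, and escalation metrics."""
    if total <= 0:
        raise ValueError("no items observed; report the metric as unmeasured")
    return count / total


# Hypothetical pilot totals and counts.
print(f"Month-one ROI: {month_one_roi_pct(1400, 1000):.1f}%")
print(f"Payback multiple: {payback_multiple(1400, 1000):.2f}x")
print(f"Approval-with-light-edits rate: {share(62, 80):.1%}")
print(f"Rewrite rate: {share(9, 80):.1%}")
print(f"Escalation accuracy: {share(11, 12):.1%}")
```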

A simple month-one interpretation model:

| Scorecard outcome | Interpretation |
|---|---|
| Positive ROI and healthy quality metrics | Strong proof of value; scale is easier to justify |
| Negative ROI but improving quality and low rewrite burden | Common in month one if setup costs are front-loaded; worth a controlled second phase |
| Positive gross savings but high rewrite or governance problems | False positive; the workflow may be financially weak once hidden labor is counted |
| Negative ROI and weak quality metrics | Weak proof; buyers should narrow scope, renegotiate, or stop |
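One way to apply this table consistently across offices is a small decision function. The sketch below treats "healthy quality" as a rewrite rate at or below 20% and escalation accuracy of at least 90%. Those thresholds, and the order of the checks, are hypothetical assumptions to tune per office.

```python
STRONG = "Strong proof of value; scale is easier to justify"
SECOND_PHASE = "Worth a controlled second phase"
FALSE_POSITIVE = "False positive; financially weak once hidden labor is counted"
WEAK = "Weak proof; narrow scope, renegotiate, or stop"


def interpret(roi_pct: float, gross_labor_value: float, rewrite_rate: float,
              escalation_accuracy: float, quality_improving: bool,
              max_rewrite_rate: float = 0.20,
              min_escalation_accuracy: float = 0.90) -> str:
    """Map month-one results onto the interpretation table (thresholds are hypothetical)."""
    quality_healthy = (rewrite_rate <= max_rewrite_rate
                       and escalation_accuracy >= min_escalation_accuracy)
    if roi_pct > 0 and quality_healthy:
        return STRONG
    if roi_pct <= 0 and quality_healthy and quality_improving:
        return SECOND_PHASE
    if gross_labor_value > 0 and not quality_healthy:
        return FALSE_POSITIVE
    return WEAK


print(interpret(-26.4, 900, rewrite_rate=0.10, escalation_accuracy=0.95,
                quality_improving=True))
```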

Different stakeholders need different versions of the same evidence.

For the executive sponsor, lead with:

- which workflows were measured

- net time or labor value by workflow

- whether outputs were genuinely usable

- whether the office wants to keep the workflow

For procurement, lead with:

- total month-one cost

- which costs are one-time versus recurring

- what evidence supports expansion, renegotiation, or stop

- whether the pilot reduced or increased internal review burden

For finance and the CFO, lead with:

- baseline labor economics

- quantified benefit versus quantified cost

- whether month-one costs are front-loaded

- what assumptions would have to hold for broader ROI to be credible

A useful reporting template is:

| Reporting line | Example |
|---|---|
| Scope measured | Four recurring executive workflows across one office |
| Baseline monthly labor cost | $1,081 |
| Observed total quantified benefit | $920 |
| Observed total month-one cost | $1,250 |
| Month-one ROI | ($920 - $1,250) / $1,250 = -26.4% |
| Interpretation | Negative accounting ROI in month one due to setup and review overhead, but quality and queue metrics support a short second phase |

This style of reporting is more credible than a vague claim that "the pilot saved hours." It gives finance the math and gives the sponsor the operating signal.
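To keep the reporting math auditable, the numeric lines of the template can be generated from the underlying totals. Here is a minimal sketch that reproduces the example above. The interpretation line stays a human judgment, so the helper leaves it out; the function name is illustrative.

```python
def reporting_rows(scope: str, baseline_cost: float, benefit: float,
                   cost: float) -> list[tuple[str, str]]:
    """Build the numeric lines of the reporting template; interpretation is written by hand."""
    roi = (benefit - cost) / cost * 100
    return [
        ("Scope measured", scope),
        ("Baseline monthly labor cost", f"${baseline_cost:,.0f}"),
        ("Observed total quantified benefit", f"${benefit:,.0f}"),
        ("Observed total month-one cost", f"${cost:,.0f}"),
        ("Month-one ROI", f"(${benefit:,.0f} - ${cost:,.0f}) / ${cost:,.0f} = {roi:.1f}%"),
    ]


rows = reporting_rows("Four recurring executive workflows across one office", 1081, 920, 1250)
print("| Reporting line | Example |")
print("|---|---|")
for label, value in rows:
    print(f"| {label} | {value} |")
```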

Month-one ROI is often messy for good reasons.

Three common cases:

Negative ROI with improving quality. This often happens when setup costs and reviewer learning are front-loaded. If quality is improving, rewrite burden is falling, and the workflow is stable, the correct conclusion may be "not yet above the line, but economically promising."

Fast drafts with a high rewrite burden. This is the classic false win. The draft arrives faster, but the EA or executive still spends too long fixing it. In that case, the workflow is not yet net-positive, no matter how attractive the raw automation number looks.

Mixed results across workflows. This is normal. One or two workflows may carry the economics while another remains too noisy. Buyers should keep the winning lanes and stop pretending every use case belongs in the business case.

Serious month-one interpretation is therefore narrower than "did we save money?" The better question is: did we produce credible economic signal under real operating conditions?
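For the mixed-results case, it helps to label each workflow separately before deciding which lanes to keep. In the sketch below, the per-workflow benefit and cost figures are hypothetical, and so is the way shared setup cost has been attributed to each workflow. The plus-or-minus 10% break-even band is also an assumption.

```python
def classify_workflow(benefit: float, cost: float, band: float = 0.10) -> str:
    """Label a workflow relative to a +/- band around break-even.

    `band` is a hypothetical tolerance expressed as a share of the workflow's cost.
    """
    net = benefit - cost
    tolerance = band * cost
    if net > tolerance:
        return "net-positive"
    if net >= -tolerance:
        return "near break-even"
    return "below the line"


# Hypothetical per-workflow month-one figures: (quantified benefit, attributed cost).
workflows = {
    "Morning brief": (420, 300),
    "Scheduling proposals": (380, 300),
    "Recurring meeting prep": (240, 250),
    "Follow-up drafting": (90, 350),
}

for name, (benefit, cost) in workflows.items():
    print(f"{name}: {classify_workflow(benefit, cost)} (net {benefit - cost:+,})")

total_net = sum(b - c for b, c in workflows.values())
print(f"Pilot total net: {total_net:+,}")
```

With these illustrative figures, two lanes clear the line, one sits near break-even, one falls below it, and the pilot total is slightly negative.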

By day 30, the most credible executive summary often sounds like this:

We measured four executive workflows against a manual baseline. Two workflows are already net-positive after review cost, one is near break-even, and one remains below the line because correction time is still too high. Total month-one ROI is slightly negative because setup and reviewer time were front-loaded, but the quality and control metrics support a short second phase focused on the winning lanes.

That is a better buying narrative than "AI is transformative" or "the pilot saved dozens of hours." It is specific, priced, and decision-ready.

Do not rely on a month-one ROI model if:

- the workflows are too low-frequency to produce meaningful data inside 30 days

- the main benefit is strategic judgment or relationship handling, which is harder to price quickly

- there is no reviewer capacity, which will distort the economics

- the organization has already decided to buy regardless of the evidence

- the actual question is long-term org redesign rather than bounded workflow value

This is also why month-one ROI should not be used as proof that an AI assistant can replace a human EA, chief of staff, or operator wholesale. A 30-day model can prove workflow economics. It cannot settle the entire support-model question.

Month-one results usually should not be annualized. Month one should first establish whether the workflow is real, repeatable, and economically credible. Annualization becomes defensible only after the behavior stabilizes.

For most buyers, the most important month-one metric is net labor value after review and correction cost. That is the fastest way to separate apparent savings from real savings.

A negative month-one ROI does not automatically rule out a second phase. If setup costs are front-loaded and the quality metrics are improving, a slightly negative month-one ROI can still represent credible proof of value. What matters is whether the economics are trending toward a sustainable model.

Procurement should challenge unpriced reviewer effort, vague implementation cost, and any benefit line that was not actually observed during the pilot.

To measure ROI for an AI executive assistant in the first 30 days, build the manual baseline, separate benefit from cost, use transparent formulas, and report the results in a way that finance can audit. Month-one ROI is not about heroic annual claims. It is about showing whether the workflow is economically credible once software cost, implementation effort, review overhead, and correction time are all counted.

That is the standard serious buyers should use: price the workflow honestly, then decide whether the signal is strong enough to scale.

Alyna is an AI Chief of Staff built for draft-first executive work: brief, triage, coordinate, and queue actions for approval before anything consequential moves.