For most COOs, the best use of an AI Chief of Staff is not "productivity" in the generic founder sense. It is tighter operating cadence. The job is to help the COO enter the weekly review with the right pre-read, surface blockers before they become escalations, and turn cross-functional decisions into tracked follow-through instead of more meetings. That matters because the real operating problem inside many companies is not lack of strategy. It is coordination drag. Atlassian's 2025 State of Teams says teams waste 25% of their time searching for answers, Asana's 2025 meeting research shows meetings remain the default coordination tool even when they do not move work forward, and McKinsey notes that many executives see their organizations as overly complex and inefficient. For COOs, an AI Chief of Staff is valuable when it reduces that drag and preserves control.

If you want the broader category definition first, start with the AI Chief of Staff overview. This page is narrower: it is about the COO operating rhythm.

The COO usually sits at the convergence point of the business:

- functional leaders bring issues upward

- strategic priorities must become weekly execution

- dependencies across finance, product, revenue, people, and operations need active management

- slippage often appears first as "small" follow-through failures

That operating reality is why a COO can be overwhelmed even in companies that are relatively disciplined. The overload is not just volume. It is fragmented context. Atlassian reports that knowledge workers and leaders waste a quarter of their time searching for answers, while Asana reports that 21% of employees say teams do not collaborate effectively across the organization and that executives now lose more time to unproductive meetings than they did a few years ago.

An AI Chief of Staff is useful here because it can do the preparation layer continuously:

- consolidate status inputs across channels

- build a decision-ready weekly review brief

- flag owners, due dates, and stalled dependencies

- draft follow-ups and escalation notes

- maintain one visible queue for what still needs a decision

That is a much better fit for COO work than a vague promise to "make you more productive."

For a COO, the role is best understood as an operating cadence layer. In practice, that means it should connect naturally to the surrounding executive workflows Alyna already supports, such as daily briefs for executives and calendar conflict resolution, because those routines feed directly into weekly operating control.

The system should help with five things:

| COO need | What the AI Chief of Staff should do |
|---|---|
| Review preparation | Assemble a weekly brief with KPIs, misses, changes since last review, and unresolved issues |
| Blocker detection | Surface stalled approvals, handoff failures, dependency conflicts, and repeated slippage |
| Escalation routing | Draft the right escalation path with owner, issue summary, impact, and required decision |
| Follow-through management | Turn meeting decisions into assignments, deadlines, reminders, and update prompts |
| Cross-functional visibility | Keep finance, product, sales, operations, and people issues visible in one control surface |

This is much closer to the real-world chief-of-staff function than to a generic AI chatbot. It mirrors the practical responsibilities of a chief of staff: briefing, coordination, routing, and review. The Center for Presidential Transition's chief-of-staff description is a useful public analogue because it emphasizes briefing, schedule management, document review, and coordination across support functions.

For Alyna specifically, this fits the operating model on the AI Chief of Staff page: the system briefs, drafts, and tracks while the executive keeps approval over consequential action.

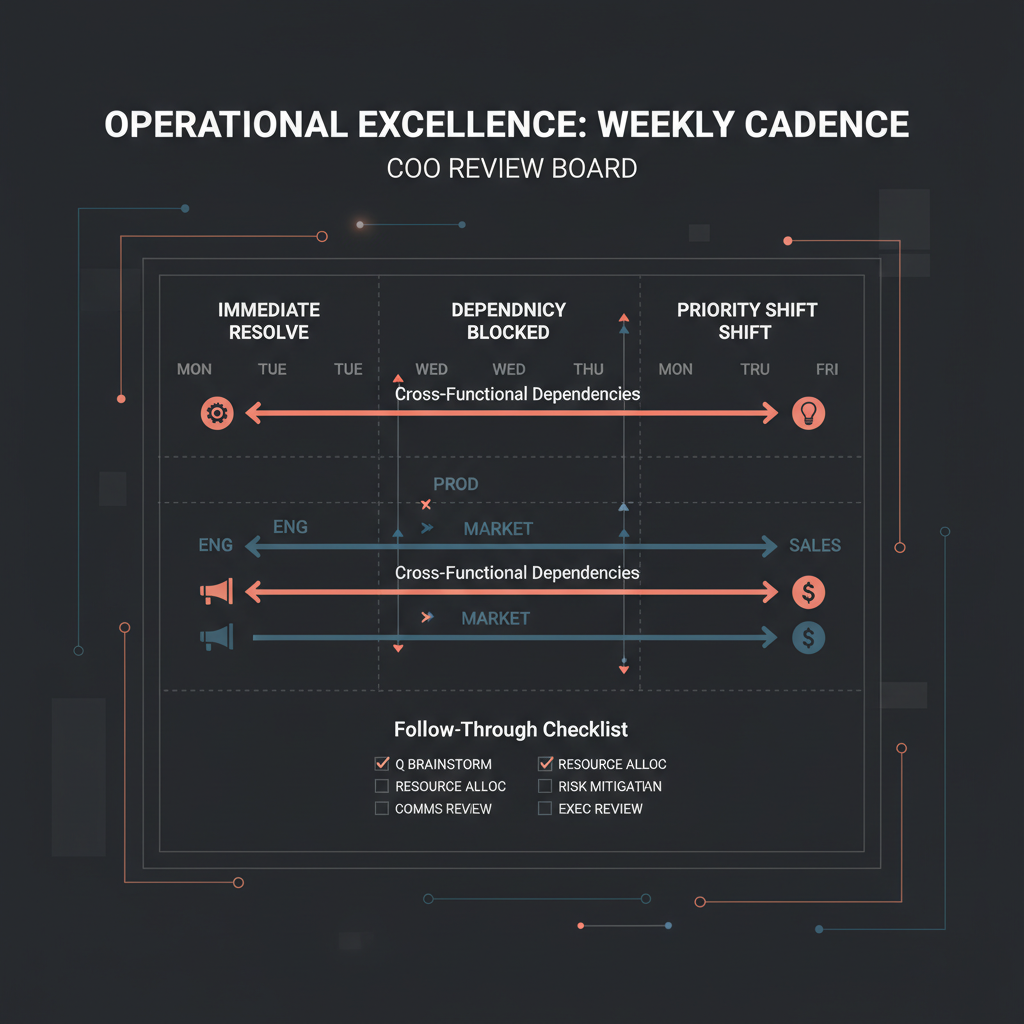

The strongest use case is a repeatable weekly operating rhythm.

Instead of letting every function bring updates in a different format, use a standard cadence:

| Cadence point | What happens | What the AI Chief of Staff prepares |
|---|---|---|
| Monday pre-read | COO gets a concise operating picture before the week starts | KPI deltas, open risks, aging blockers, key meetings, and decisions due this week |
| Midweek blocker check | Critical dependencies are reviewed before they snowball | Escalation queue grouped by owner, severity, and time sensitivity |
| Weekly operating review | Functional leaders align on what is off-track and what needs help | One brief with metrics, root-cause notes, and recommended discussion order |
| Post-review follow-through | Decisions turn into actions with owners and dates | Draft action log, reminders, and stakeholder follow-up messages |
| Friday closeout | COO sees what moved, what slipped, and what rolls forward | Completed items, misses, unresolved decisions, and next-week watchlist |

This is the practical value proposition: fewer status-harvesting meetings, more decision-ready reviews.

Bain's guidance on business performance reviews is useful here. Bain argues that review forums work best when targets are tied clearly to strategy, agendas focus on what is off-track, prep is kept lean, and consequences follow from the review. That maps directly to a COO workflow: the AI should not produce a longer status packet. It should produce a leaner, sharper one.

Many weekly reviews are too backward-looking. They become readouts instead of execution forums.

A better structure is:

| Review segment | Primary question | What good looks like |
|---|---|---|
| 1. What changed since last week? | What is materially different? | Only new information, not recycled status |
| 2. What is off-track? | Which KPIs, milestones, or dependencies slipped? | Variance is visible with owner and reason attached |
| 3. What is blocked? | What cannot move without intervention? | True blockers separated from ordinary delay |
| 4. What decision is required? | What needs COO judgment now? | Each decision framed with options and trade-offs |
| 5. What must happen next? | What are the commitments before the next review? | Named owner, due date, and follow-up path recorded |

That framework matters because poor operating reviews often fail in predictable ways: too much prep, unclear ownership, too much time spent re-explaining the past, and too little discipline after the meeting. Bain explicitly warns against bloated pre-reads and backward-looking review sessions. The AI Chief of Staff should reduce those failure modes, not digitize them.

If the weekly brief is the visible use case, blocker escalation is the economic one.

Cross-functional execution usually slows down for a small set of recurring reasons:

- a dependency has no clear owner

- one function is waiting on another but nobody has named the decision

- a metric slipped but the root cause is still ambiguous

- the issue is politically sensitive, so teams avoid escalation until late

- there is disagreement about whether the problem is tactical, structural, or resource-related

This is why generic status reporting is not enough. The COO needs a system that can distinguish between:

- noise: updates that do not need intervention

- attention items: issues that need monitoring

- true blockers: issues that require a decision, a resource shift, or escalation

Use a simple escalation ladder:

| Issue type | Example | Escalation rule | Typical owner |
|---|---|---|---|
| Watch | Milestone slipped by a day, no downstream impact yet | Keep in brief, no escalation yet | Functional lead |
| Assist | Dependency is at risk and cross-team help is needed | Route to staff owner or operations manager | Program owner |
| Escalate | Delay affects another team, customer promise, budget, or launch date | Add to COO queue before next review | Functional exec or PMO lead |
| Intervene now | Material risk to revenue, compliance, people, or external commitments | Immediate alert with draft decision memo | COO / CEO / designated exec |

That ladder gives the AI something useful to do. Instead of summarizing every update equally, it can classify issues, surface the real bottlenecks, and draft a short escalation memo with:

- issue summary

- owner

- dependency involved

- downstream risk

- decision needed

- recommended next step

That is exactly the kind of structure that makes a COO meeting shorter and sharper.
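The ladder and memo fields above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how Alyna or any other product works: the `Issue` fields, the three boolean flags, and the `classify` and `draft_memo` helpers are all hypothetical names, and the flags stand in for whatever signals a real system would use to decide which rung applies.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Rungs of the escalation ladder, lowest to highest."""
    WATCH = 1
    ASSIST = 2
    ESCALATE = 3
    INTERVENE_NOW = 4


@dataclass
class Issue:
    # Memo fields, matching the list above.
    summary: str
    owner: str
    dependency: str
    downstream_risk: str
    decision_needed: str
    next_step: str
    # Hypothetical signals; a real system would derive these from status inputs.
    needs_cross_team_help: bool = False
    affects_other_commitments: bool = False  # other team, customer promise, budget, launch date
    material_risk: bool = False  # revenue, compliance, people, external commitments


def classify(issue: Issue) -> Level:
    """Return the highest rung whose condition holds; default to WATCH."""
    if issue.material_risk:
        return Level.INTERVENE_NOW
    if issue.affects_other_commitments:
        return Level.ESCALATE
    if issue.needs_cross_team_help:
        return Level.ASSIST
    return Level.WATCH


def draft_memo(issue: Issue) -> str:
    """Render a short escalation memo as plain text for human review."""
    return "\n".join([
        f"Level: {classify(issue).name}",
        f"Issue summary: {issue.summary}",
        f"Owner: {issue.owner}",
        f"Dependency involved: {issue.dependency}",
        f"Downstream risk: {issue.downstream_risk}",
        f"Decision needed: {issue.decision_needed}",
        f"Recommended next step: {issue.next_step}",
    ])
```

Checking the most severe condition first means an issue that qualifies for several rungs always lands on the highest one. The memo is returned as a draft string rather than sent anywhere, which keeps the human approval step outside this code.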

A weekly review is only useful if the decisions survive the meeting.

This is where many COO operating systems break:

- the meeting ends without a single action log

- owners are implied rather than named

- deadlines are verbal rather than recorded

- follow-ups happen in scattered channels

- the same blocker returns next week with a slightly different explanation

An AI Chief of Staff should act as the memory and routing layer for these moments. That means:

- extracting decisions from the meeting

- converting them into an action register

- prompting for missing owner or due date fields

- drafting the follow-up notes across stakeholders

- resurfacing overdue items before the next review

OpenAI's enterprise AI report is helpful context here because it suggests that the highest value comes from structured workflows that reduce the gap between intent and execution. That is exactly what COO follow-through is: closing the distance between a decision made in a room and an outcome delivered in the business.
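As a rough sketch of the action register described above, the following Python shows two of the listed behaviors: prompting for a missing owner or due date, and resurfacing overdue items before the next review. The `Action` record and both helper functions are hypothetical and only illustrate the shape of the data; extracting decisions from a meeting is assumed to have happened upstream.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Action:
    """One row in the action register (hypothetical structure)."""
    decision: str
    owner: Optional[str] = None
    due: Optional[date] = None
    done: bool = False


def missing_fields(action: Action) -> List[str]:
    """List the fields someone must fill in before the action is trackable."""
    missing = []
    if not action.owner:
        missing.append("owner")
    if action.due is None:
        missing.append("due date")
    return missing


def overdue(register: List[Action], today: date) -> List[Action]:
    """Return open actions whose due date is strictly before today."""
    return [
        a for a in register
        if not a.done and a.due is not None and a.due < today
    ]
```

An action with no due date never shows up as overdue in this sketch, which is why the missing-field prompt matters: without it, an unowned or undated commitment would silently drop out of the next review.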

If you are designing this workflow, keep it operationally strict.

| Layer | What belongs there | Why it matters |
|---|---|---|
| Weekly brief | KPIs, misses, blockers, pending decisions | Gives the COO one pre-read instead of fragmented updates |
| Decision log | Decision taken, rationale, approver, date | Prevents re-litigation and ambiguity |
| Action log | Owner, due date, next checkpoint, dependency | Makes follow-through measurable |
| Escalation queue | Items needing intervention, resource shift, or executive decision | Keeps the COO focused on real bottlenecks |
| Closeout summary | Completed items, slipped items, unresolved dependencies | Creates a clean handoff to the next week |

The most effective setup usually starts with a narrow scope, not a company-wide coordination overhaul. That is why the adoption guidance in first 90 days with an AI executive assistant and how to roll out an AI executive assistant to your team still applies here: start with a small set of high-frequency workflows, define the approval rules, and only then widen the footprint.

There is a temptation to imagine a COO-facing system that autonomously resolves every blocker, sends every follow-up, or reprioritizes the business on its own. That is usually the wrong design.

The safer and more useful boundary is:

- AI may summarize, classify, draft, remind, and propose

- humans should approve consequential external messages, org changes, and sensitive escalations

- high-stakes people, legal, finance, and customer-commitment issues should always stay under explicit oversight

That boundary is not just conservative taste. NIST and the OECD both reinforce the need for accountability, transparency, and human oversight when AI systems influence workplace decisions.

For a COO, the plain-English rule is simple: let the AI compress coordination work, but do not let it silently create commitments.
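One way to make that boundary concrete is a default-deny approval gate. The sketch below is an assumption-laden illustration: the `ActionKind` values, the domain labels, and the `requires_approval` helper are hypothetical names chosen to mirror the three bullets above, not an actual product API.

```python
from enum import Enum
from typing import Optional


class ActionKind(Enum):
    SUMMARIZE = "summarize"
    CLASSIFY = "classify"
    DRAFT = "draft"
    REMIND = "remind"
    PROPOSE = "propose"
    SEND_EXTERNAL_MESSAGE = "send_external_message"
    ORG_CHANGE = "org_change"
    SENSITIVE_ESCALATION = "sensitive_escalation"


# Work the AI may do without sign-off (first bullet above).
AUTONOMOUS = {
    ActionKind.SUMMARIZE,
    ActionKind.CLASSIFY,
    ActionKind.DRAFT,
    ActionKind.REMIND,
    ActionKind.PROPOSE,
}

# Domains that always stay under explicit oversight (third bullet above).
# The label strings are hypothetical.
HIGH_STAKES_DOMAINS = {"people", "legal", "finance", "customer_commitment"}


def requires_approval(kind: ActionKind, domain: Optional[str] = None) -> bool:
    """Default-deny: anything not explicitly autonomous needs a human."""
    if domain in HIGH_STAKES_DOMAINS:
        return True
    return kind not in AUTONOMOUS
```

The design choice here is that the allowlist is the short side: a newly added action kind requires approval until someone deliberately marks it autonomous. Treating even a reminder in a high-stakes domain as approval-gated is a conservative reading of "explicit oversight" and could reasonably be relaxed.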

If the setup is working, the signs are operational rather than theatrical:

- the weekly brief is actually read

- reviews spend more time on decisions and less on status recitation

- blockers surface earlier in the week rather than inside the meeting

- follow-up notes are drafted the same day

- owners and deadlines are visible without chasing people across tools

- repeated misses are easier to identify because the pattern is documented

There is also a cultural benefit. Teams stop treating the COO review as performance theater and start treating it as a problem-solving forum. That matters because Bain notes that effective reviews should be constructive, focused on what is off-track, and linked to actual consequences and next steps.

An AI Chief of Staff for COOs is not always the right move.

It is a bad fit when:

- the company has no stable operating cadence yet, so the AI would only mirror chaos

- there is no agreed KPI set or no discipline around owners and due dates

- teams refuse to surface bad news honestly, which means the brief will be incomplete

- the COO is looking for strategic judgment or political mediation rather than coordination leverage

- the workflow depends on autonomous execution rather than approval-first drafting and routing

It is also a weak fit if the organization keeps changing tools, ownership structures, or planning formats every few weeks. In that environment, the AI spends too much time relearning the system instead of helping run it.

The best candidates are COOs who already run some version of a weekly business review and need the machine to make it tighter, leaner, and more reliable.

The emphasis differs from a generic executive assistant workflow, which usually centers on inbox, calendar, and personal coordination. A COO workflow centers on operating reviews, blockers, dependencies, and cross-functional action tracking.

The best workflow to start with is usually the weekly review pre-read. It is frequent, structured, and painful enough that even modest improvement is visible quickly.

The AI should usually not send high-stakes escalations on its own. It can draft and route them, but consequential escalation should normally remain approval-first so the COO controls framing and timing.

For most COOs, the metric that matters is not "messages processed." It is whether blockers surface earlier, decisions are recorded more cleanly, and actions are completed with fewer follow-up meetings.

For COOs, an AI Chief of Staff is most valuable when it sharpens the operating cadence: one better weekly brief, one clearer blocker queue, one stronger follow-through system across functions. That is the real job. Not generic productivity. Not founder lifestyle optimization. Operational control.

If the system helps the COO see what changed, what is blocked, who owns the next move, and what still needs a decision, it is doing the work that matters. If it merely generates more summaries and more notifications, it is adding to the problem.

Alyna works as an AI Chief of Staff for executives who need draft-first coordination, daily and weekly briefs, and one approval surface for follow-through.