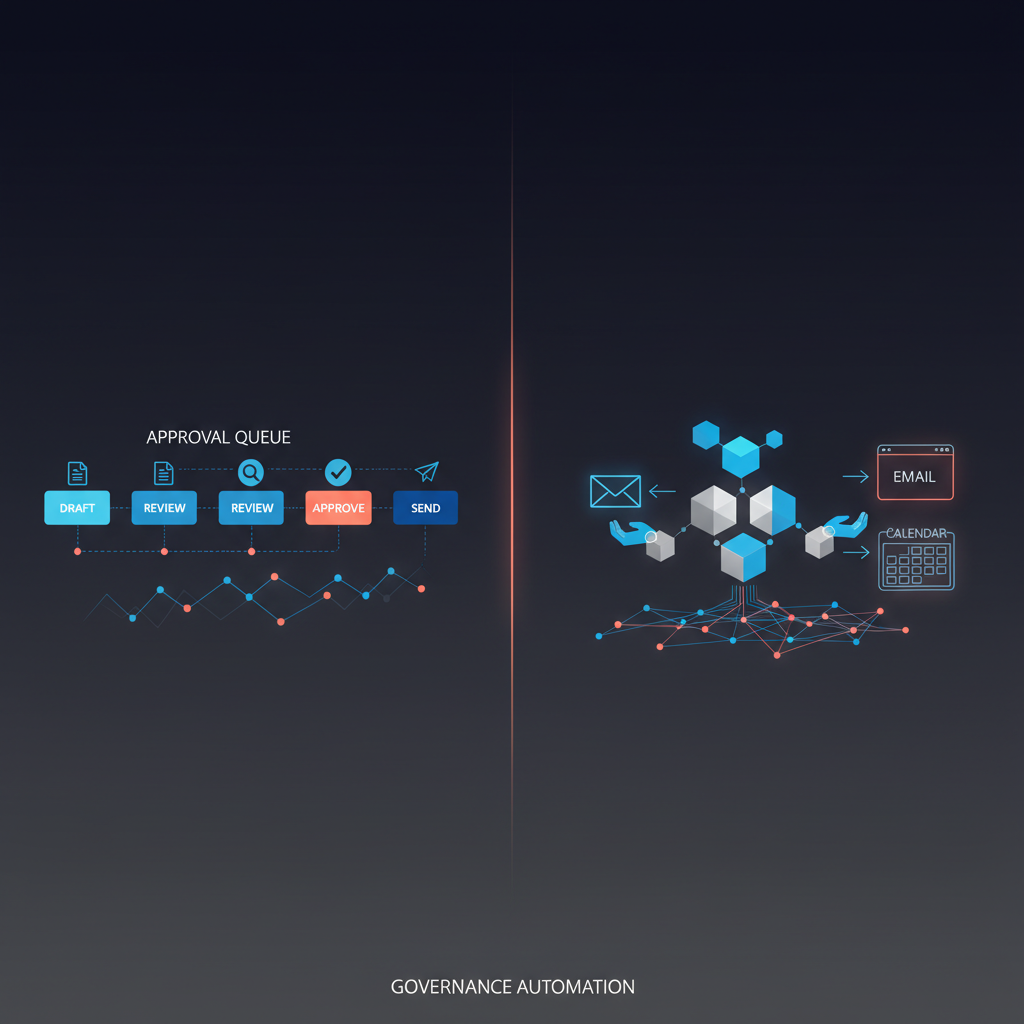

If an AI agent will touch executive communication, the more useful question is not "Which model is safer?" but "Which decision rights are being delegated?" Approval-first and autonomous agents are not just different UX patterns. They are different governance categories. An approval-first agent can triage, summarize, draft, propose, and queue actions while leaving authorization with a human. An autonomous agent can execute within its permission scope and report, or ask forgiveness, afterward. That is a materially different architecture of control. OpenAI defines agents as systems that independently accomplish tasks on a user's behalf within guardrails, and Anthropic distinguishes between predefined workflows and dynamically acting agents. For executive communication, that category difference matters because communication is not merely output generation. It is delegated authority. Nylas reports that only 4% of teams in its 2026 survey allow agents to act without any human approval, even as agent adoption moves into production (Nylas, 2026).

For executive buyers, the real question is not "Do we want autonomous AI?" It is: where should autonomy stop, and where should executive approval begin? If you want the adjacent governance pages first, read why approval-first AI assistants are becoming the enterprise default, approval workflow governance, and security and compliance for AI executive assistants.

Many teams compare "approval-first" and "autonomous" as if there are only two categories. In reality, executive buyers should distinguish three.

| Operating model | What the AI can do | Human role | Best fit | Main failure mode |
|---|---|---|---|---|
| Approval-first | Draft, summarize, propose, queue actions | Explicitly approve before consequential execution | Executive email, calendar, stakeholder communication, regulated workflows | More queue friction |
| Human-on-the-loop | Execute some actions by default, escalate exceptions | Monitor and intervene when needed | Internal operations with bounded downside | Missed intervention, weak auditability |
| Autonomous | Plan and act across tools with broad permissions | Set goals and review outcomes later | Trusted, low-risk, highly structured environments | Silent errors, overreach, blurred accountability |

This category split matters because the underlying architectures really are different. Anthropic distinguishes between workflows, where tools and steps follow predefined paths, and agents, where the model dynamically directs tool use. OpenAI similarly defines agents as systems that independently accomplish tasks on a user's behalf while operating within guardrails. That independence is exactly why buyers cannot stop at "human in the loop" marketing language. They need to ask which actions are proposed, which are auto-executed, and what happens when the system is wrong.

For executive communication, the most important dividing line is simple:

Preparing a communication is not the same thing as authorizing a communication.

A lot of AI governance discussion treats email or scheduling as if they were routine clerical actions. That is a buyer mistake. Executive communication is not just data movement. It creates commitments, reveals priorities, affects relationships, and often leaves a durable business record.

That is why communication risk compounds faster than teams expect:

| Risk layer | Example failure | Why after-the-fact review is weak |
|---|---|---|
| Commitment risk | Agent confirms a meeting, approves timing, or implies a promise | The other side already formed an expectation |
| Relationship risk | Tone is too cold, too aggressive, or too casual for the stakeholder | Social damage is hard to "undo" with a correction |
| Confidentiality risk | Draft includes internal context, legal sensitivity, or board information | Sensitive information may already be exposed |
| Timing risk | Message goes out at the wrong moment during negotiation or escalation | Timing itself can change the meaning of the message |
| Recordkeeping risk | No clear trail of who approved what or what context was shown | Incident review, compliance, and accountability break down |

This is where generic enthusiasm for autonomy runs into executive reality. Microsoft's 2025 Work Trend Index argues that the future is human-agent teams, not AI acting alone, and explicitly frames the new operational question as the right human-agent ratio for a given task. That framing is especially useful here. External communication is one of the clearest examples of work where society, customers, candidates, investors, and counterparties still expect a person to own the consequence.

The regulatory direction points the same way. EU AI Act Article 14 requires that human oversight be effective, proportionate to the risks and level of autonomy, and designed so overseers can understand limitations, interpret outputs, override them, and interrupt the system. Even when an executive assistant deployment is not formally a high-risk AI system, serious buyers are increasingly adopting that same oversight standard as a governance baseline.

Most teams treat this as a tooling decision. Executive operators should treat it as a decision-rights decision.

The fastest practical framework is CARS:

| CARS factor | Question to ask | Resulting default |
|---|---|---|
| Commitment | Does the message create a promise, date, expectation, or approval? | If yes, keep approval-first |
| Audience | Is the recipient external, senior, sensitive, or strategically important? | If yes, keep approval-first |
| Reversibility | Can a mistake be fully undone with low cost? | If no, keep approval-first |
| Sensitivity | Does the task involve confidential, regulated, legal, personnel, or reputational context? | If yes, keep approval-first |

This framework is intentionally stricter than generic "risk-based automation" language because communication failures are often cheap to generate and expensive to absorb.
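As a rough illustration, the CARS check can be sketched as a small gate that runs before any outbound action. This is a minimal sketch, not a reference implementation: the `CarsAssessment` class, its field names, and the "any single factor triggers approval" rule are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CarsAssessment:
    """Hypothetical CARS answers for one proposed outbound action."""
    creates_commitment: bool  # Commitment: promise, date, expectation, approval
    sensitive_audience: bool  # Audience: external, senior, or strategically important
    fully_reversible: bool    # Reversibility: a mistake can be undone at low cost
    sensitive_content: bool   # Sensitivity: confidential, regulated, legal, personnel


def requires_approval(a: CarsAssessment) -> bool:
    """Return True when any single CARS factor signals high risk (assumed rule)."""
    return (
        a.creates_commitment
        or a.sensitive_audience
        or not a.fully_reversible
        or a.sensitive_content
    )


# Two illustrative actions: an investor reply and an internal CRM tag update.
investor_reply = CarsAssessment(True, True, False, True)
crm_tag_update = CarsAssessment(False, False, True, False)

print(requires_approval(investor_reply))  # True
print(requires_approval(crm_tag_update))  # False
```

Treating every factor as an independent trigger mirrors the strictness described above: one high-risk answer is enough to keep the action in the approval queue.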

This is also why OpenAI's practical guide to building agents recommends rating tools by factors such as read-only vs. write access, reversibility, account permissions, and financial impact. That exact logic maps well to executive communication. Sending is a high-risk write action. Proposing a draft is not.

Instead of debating autonomy in the abstract, map communication workflows into four action layers:

| Action layer | What the agent is allowed to do | Default human role | Better default category |
|---|---|---|---|
| Prepare | Summarize, classify, gather context, build briefs | Review only for quality and completeness | Approval-first or autonomous, depending on consequence |
| Propose | Draft messages, suggest calendar moves, package options | Edit, approve, or reject the proposal | Usually approval-first |
| Authorize | Approve the final content, timing, or commitment | Human explicitly authorizes | Human-owned by default |
| Execute | Send, schedule, confirm, or update external systems | Monitor, intervene, or review afterward | Autonomous only when the delegated authority is intentional and reversible |

This architecture is the real category definition:

- Approval-first systems let AI prepare and propose, but keep authorization with a named human.

- Human-on-the-loop systems allow execution within a bounded class of actions and rely on intervention when exceptions appear.

- Autonomous systems let the agent both decide and execute within its delegated scope.

That framing is more durable than policy examples because it tells buyers what authority boundary they are actually purchasing.
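One way to make the authority boundary concrete is to express it as a check in front of the Execute layer. The sketch below is illustrative only: `OperatingModel`, `may_execute`, the action names, and the idea of a `delegated_scope` set are hypothetical names chosen for this example, and a real system would also need identity, logging, and escalation handling.

```python
from enum import Enum
from typing import FrozenSet


class OperatingModel(Enum):
    APPROVAL_FIRST = "approval-first"
    HUMAN_ON_THE_LOOP = "human-on-the-loop"
    AUTONOMOUS = "autonomous"


def may_execute(
    model: OperatingModel,
    action: str,
    human_approved: bool,
    delegated_scope: FrozenSet[str] = frozenset(),
) -> bool:
    """Decide whether the Execute layer may run for one proposed action."""
    if model is OperatingModel.APPROVAL_FIRST:
        # Authorization always stays with a named human.
        return human_approved
    # Human-on-the-loop and autonomous: authority is pre-delegated for actions
    # inside the scope; anything outside it still needs explicit approval.
    return action in delegated_scope or human_approved


scope = frozenset({"update_crm_field"})

print(may_execute(OperatingModel.APPROVAL_FIRST, "send_email", False))               # False
print(may_execute(OperatingModel.HUMAN_ON_THE_LOOP, "update_crm_field", False, scope))  # True
print(may_execute(OperatingModel.HUMAN_ON_THE_LOOP, "send_email", False, scope))        # False
```

In this sketch, human-on-the-loop and autonomous share the same gate and differ only in how broad `delegated_scope` is and how closely a human monitors the results, which is one reasonable reading of the definitions above rather than the only one.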

Approval-first is not the "less advanced" model. In executive communication, it is often the more operationally mature one.

Here is why.

An approval-first system lets AI do the heavy lifting before the moment that matters. It can read the thread, pull context, summarize the history, draft the response, suggest time slots, flag risks, and tee up a recommendation. But the person who owns the relationship still decides what actually goes out.

That separation is powerful because it keeps leverage high while keeping accountability legible.

OpenAI's white paper on governing agentic AI systems argues for practices such as constraining action space, maintaining visibility into operations, supporting interruptibility, and preserving accountability. Approval-first naturally fits those practices. Autonomous communication systems can still try to add those controls later, but they start from the harder position: the system already acts before review.

Article 14 of the EU AI Act explicitly warns about automation bias: the tendency for people to over-rely on system outputs. Approval-first does not eliminate that bias, but it makes the review point explicit. A human-on-the-loop model can leave reviewers in a weaker position, because the system's behavior may only be examined after the action has already taken effect.

The strongest 2026 market signal is not that enterprises want less AI. It is that they want more AI with more observability. Microsoft Security's March 2026 guidance emphasizes agent observability, identity governance, DLP, audit, and communication compliance as core controls for production agent deployments. That is effectively the infrastructure around approval-first operation: who can act, what they can access, what gets logged, and how risky communications are surfaced for human review.

The case against autonomous executive communication is not a case against autonomy everywhere.

Anthropic is explicit that autonomous agents can be the right pattern for open-ended tasks in trusted environments, provided teams do extensive testing in sandboxed settings and apply guardrails. That is a good mental model.

Autonomy is often reasonable when the agent is operating in low-risk, reversible, well-instrumented environments. It becomes less reasonable when the action creates commitments, affects relationships, or relies on context that humans would want to authorize explicitly.

This is why the strongest operating model for many executive teams is a split system:

| Task layer | Recommended autonomy |
|---|---|
| Internal research and synthesis | High autonomy |
| Briefing, summarization, and drafting | Medium autonomy |
| Outbound email, scheduling, and stakeholder messaging | Approval-first |
| Anything with legal, finance, personnel, or reputational exposure | Explicit approval plus audit trail |

That design is not conservative for its own sake. It simply matches the cost of error to the control boundary.

If a vendor says it supports executive communication, the evaluation checklist should go far beyond "human in the loop."

Use this buyer standard:

- Defined authorization boundary. Buyers should know exactly which actions the agent may prepare, propose, authorize, or execute.

- Named decision owner. Every consequential action should map to a human who retains authorizing responsibility.

- One review surface or equivalent control point. Approvals scattered across email, chat, and calendar create missed actions and weak auditability. See approval workflows for executives.

- Clear audit trail. Buyers should be able to reconstruct the proposed action, who approved it, what context was shown, and what was ultimately executed.

- Least-privilege access and scoped permissions. Microsoft now treats agents as identity-aware entities with distinct governance controls; serious vendors should do the equivalent in their own stack (Microsoft Security, 2026).

- Security controls for prompt injection, sensitive data leakage, and excessive agency. OWASP's LLM Top 10 highlights prompt injection, sensitive information disclosure, excessive agency, and overreliance as core risks.

- A real stop/override model. Oversight is not meaningful if users cannot interrupt the workflow, reverse a decision, or safely halt the agent.
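To make the audit-trail and named-owner items on this checklist tangible, here is a minimal sketch of what one record might capture. The `AuditRecord` class and its field names are hypothetical and not drawn from any vendor's schema. It assumes a record should hold the proposed action, the decision owner, the context shown, and what was executed, and that execution is refused until the record is approved.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditRecord:
    proposed_action: str
    decision_owner: str                   # named human who authorizes
    context_shown: List[str]              # what the reviewer saw before deciding
    decision: str = "pending"             # "pending" or "approved"
    executed_action: Optional[str] = None
    events: List[dict] = field(default_factory=list)

    def _log(self, event: str) -> None:
        # Timestamp every state change in UTC so the trail can be reconstructed.
        self.events.append(
            {"event": event, "at": datetime.now(timezone.utc).isoformat()}
        )

    def approve(self) -> None:
        self.decision = "approved"
        self._log("approved")

    def execute(self, final_action: str) -> None:
        # Refuse to execute anything that has not been explicitly approved.
        if self.decision != "approved":
            raise PermissionError("action has not been approved")
        self.executed_action = final_action
        self._log("executed")


record = AuditRecord(
    proposed_action="Reply to investor with Thursday 10:00 slot",
    decision_owner="chief.of.staff@example.com",
    context_shown=["thread summary", "calendar availability"],
)
record.approve()
record.execute("Sent reply offering Thursday 10:00")
print(json.dumps(asdict(record), indent=2))
```

Storing the executed action separately from the proposed one lets a reviewer see whether the message was edited between proposal and send.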

If you want the executive-specific version of this category, Alyna's AI Chief of Staff is positioned around that draft-first, approval-before-send boundary rather than broad outbound autonomy.

Approval-first is not a universal answer.

You may want less friction when:

- the workflow is purely internal and highly repetitive

- actions are reversible and carry minimal social or legal downside

- throughput matters more than judgment

- the system operates in a narrow, structured environment with clean success criteria

- the human review queue would destroy the value of the workflow

Examples might include auto-tagging records, generating internal summaries, or updating CRM fields under tight validation rules.

Approval-first also has real trade-offs:

- It adds queue management work.

- It can slow down throughput for low-risk items.

- It depends on disciplined review habits.

- It does not solve weak prompts, poor context, or bad system design by itself.

So the right conclusion is not "everything must be approved forever." It is: communication should start from approval-first, then earn carefully bounded autonomy only where the task is reversible, low-risk, and operationally observable.

Approval-first agents prepare work and wait for a human to authorize consequential actions. Autonomous agents are allowed to execute within their permission scope and may only notify humans afterward. The difference is not just UX. It is a difference in decision rights, oversight, and who absorbs the cost of error.

Sometimes, but only in narrow cases. Low-risk internal updates or highly structured reminders may be candidates. Customer, investor, board, recruiting, legal, and partnership communication usually should not be fully autonomous because the message itself creates commitments and relationship effects.

No. Buyers should ask what requires approval, what can auto-execute, what gets logged, whether users can edit before send, and whether the system can be interrupted. Without those specifics, "human in the loop" often describes monitoring theater rather than real control.

Not necessarily. In executive environments, the main time savings come from triage, summarization, drafting, and coordination before the send step. Approval-first often captures most of the value while avoiding the highest-cost mistakes.

Approval-first and autonomous AI agents are not interchangeable categories because they delegate different decision rights. For executive communication, the real design question is where preparation ends, where authorization begins, and which actions are allowed to execute without a fresh human decision. Autonomous agents can be powerful in trusted, internal, low-risk environments. But once the system starts speaking for an executive, booking on an executive's behalf, or creating outward commitments, buyers should treat the authorization boundary as the category boundary.

That is the position serious buyers should take in 2026: let AI do the preparation layer aggressively, define the decision-rights architecture explicitly, keep consequential authorization human unless autonomy is intentionally earned, and require the audit trail to prove it.

Alyna is an AI Chief of Staff built for draft-first executive work: triage, brief, draft, approve, then send.