Approval-first AI assistants are becoming the enterprise default because they solve the real market problem: how to get AI leverage without losing human accountability. This page is intentionally not another tactical explainer of approval queues. It is a category thesis about why the market is moving this way now.

In practice, an approval-first assistant drafts, proposes, and queues actions, but it does not send emails, book meetings, or message stakeholders until a person explicitly approves the action. That design matters more in 2026 than it did two years ago because the constraints are no longer theoretical. Procurement teams want evidence. Legal teams want documented oversight. Operators want speed, but they also want to know that nothing customer-facing happens silently. If your AI touches inboxes, calendars, or external communication, the question is not "can it automate?" It is "can it automate in a way that a serious company can defend?"

The answer, increasingly, is approval-first. Enterprise AI adoption is accelerating, but value comes from structured workflows and repeatable processes rather than casual experimentation. OpenAI reported in late 2025 that usage of structured workflows such as Projects and Custom GPTs had increased 19x year to date, while 75% of surveyed workers said AI improved the speed or quality of their output and heavy users reported more than 10 hours saved per week. At the same time, adoption still breaks down when organizations do not redesign workflows and training around the tool. OpenAI's state of enterprise AI report and BCG's AI at Work 2025 survey point to the same conclusion: durable adoption depends on workflow design, leadership support, and trust. In that environment, approval-first is not just a product choice. It is becoming the procurement-friendly, change-management-friendly default for executive AI. If you want the adjacent operational pages, see approval workflow governance, AI executive assistant vendor due diligence, and first 90 days with an AI executive assistant.

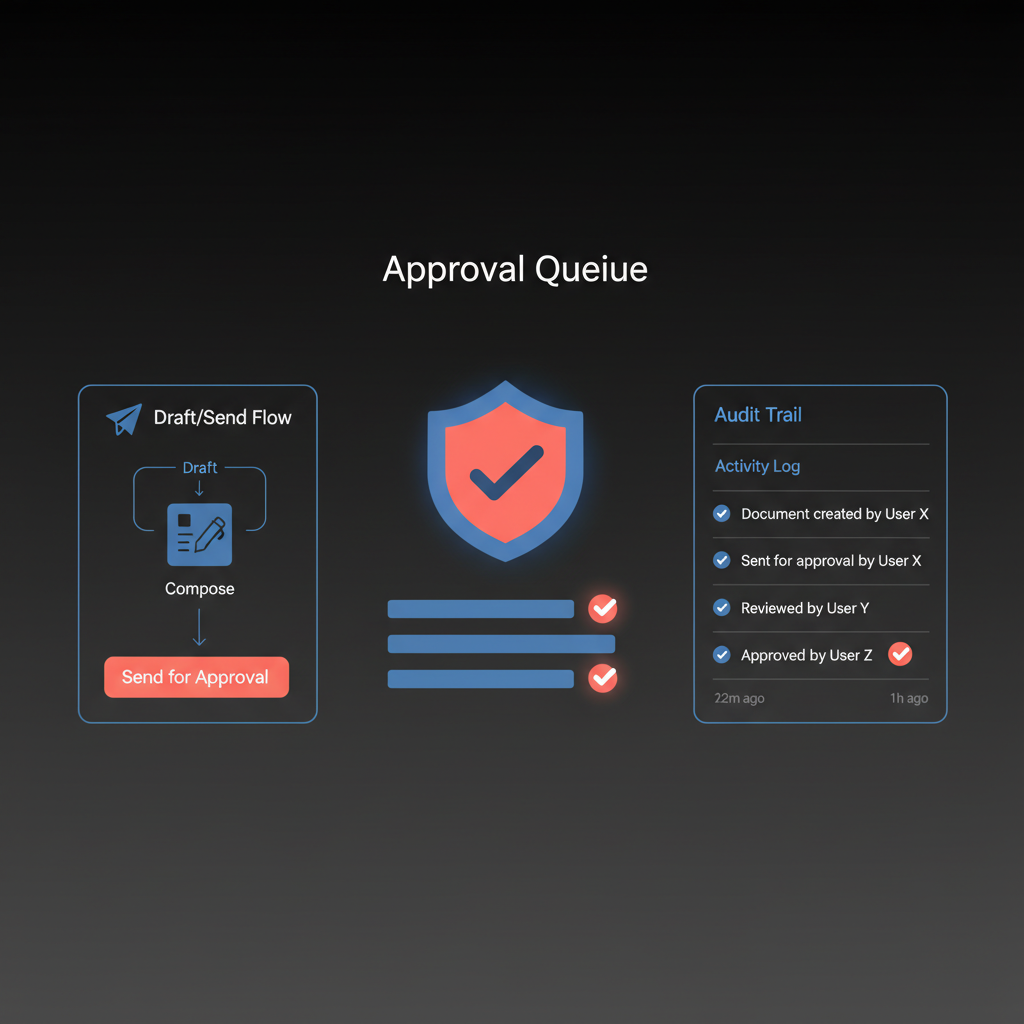

An approval-first AI assistant is an assistant that can prepare work without independently executing consequential actions. It can read context, summarize, draft, propose, and tee up the next step, but a human remains the final authorizer for anything that changes the outside world.

A concise, quotable definition:

Approval-first AI means the system can prepare the action, but a human must authorize the action before it is executed.

That is different from looser terms like "human in the loop," which are often used too broadly. In practice, there are at least three patterns:

| Oversight model | How it works | Best for | Main risk |
|---|---|---|---|
| Approval-first / human in the loop | AI proposes each consequential action and waits for approval | External communication, regulated workflows, executive support | More queue friction |
| Human on the loop | AI acts by default, human monitors and intervenes when needed | Internal operations, lower-risk automations | Missed interventions and weak auditability |
| Autonomous execution | AI acts within broad permissions and may only notify after the fact | Narrow, low-risk, highly structured tasks | Silent failure, reputational damage, weak accountability |

For executive assistants, that distinction is not academic. Email, calendar, travel, hiring coordination, investor communication, and customer-facing follow-ups all create records, expectations, and occasionally legal obligations. The more external the action, the stronger the case for explicit approval.

Teams are clearly interested in agentic AI, but they are not embracing unlimited autonomy. According to Nylas's 2026 State of Agentic AI research, 67% of developers and product leaders say their teams are already building or shipping agentic workflows, yet only 4% allow agents to act without any human approval. Most teams are using graduated trust models instead: low-risk actions may run automatically, while higher-risk actions still require review.

That is the real market signal. Buyers are not rejecting AI. They are rejecting blind execution.
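The graduated trust model described above can be sketched as a small routing policy. This is a minimal illustration, not any vendor's implementation: the names `ProposedAction`, `AUTO_RUN_KINDS`, `classify`, and `route` are hypothetical, and the allow-list of low-risk action kinds is an assumption chosen only for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


class Route(Enum):
    AUTO_RUN = "auto_run"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class ProposedAction:
    kind: str       # hypothetical action name, e.g. "label_email" or "send_email"
    external: bool  # True if the action reaches anyone other than the user


# Assumed allow-list: only internal, easily reversible kinds may run automatically.
AUTO_RUN_KINDS = frozenset({"label_email", "draft_reply", "summarize_thread"})


def classify(action: ProposedAction) -> Risk:
    """Treat anything external, or anything not allow-listed, as high risk."""
    if action.external or action.kind not in AUTO_RUN_KINDS:
        return Risk.HIGH
    return Risk.LOW


def route(action: ProposedAction) -> Route:
    """Low-risk actions run automatically; everything else waits for a human."""
    if classify(action) is Risk.LOW:
        return Route.AUTO_RUN
    return Route.NEEDS_APPROVAL
```

The design choice worth noting is the default: an action kind the policy has never seen falls through to `NEEDS_APPROVAL`, so new capabilities start gated rather than trusted.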

BCG found that only one-third of employees say they have been properly trained on AI, and frontline adoption remains stuck around 51% even as managers and leaders use AI heavily. The biggest improvements come when organizations redesign workflows, train people, and make the rules of usage clear. Approval-first helps because it gives users an understandable mental model:

- The AI drafts.

- I review.

- The system logs the decision.

- Then it acts.

That is much easier to operationalize than vague promises about "autonomy with safeguards."
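The four-step mental model above (draft, review, log, act) can be sketched as a small approval gate. This is a hedged illustration under stated assumptions: `Proposal`, `ApprovalQueue`, and the `executor` callback are hypothetical names, and the in-memory `log` list stands in for whatever durable store a real system would use.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Proposal:
    action: str              # hypothetical action name, e.g. "send_email"
    content: str             # what the AI drafted
    status: str = "pending"  # pending -> approved or rejected -> executed


@dataclass
class ApprovalQueue:
    executor: Callable[[str, str], None]  # performs the real side effect
    log: List[str] = field(default_factory=list)

    def propose(self, action: str, content: str) -> Proposal:
        # Step 1: the AI drafts. Nothing is executed here.
        self.log.append(f"proposed {action}")
        return Proposal(action, content)

    def review(self, proposal: Proposal, approver: str, approve: bool,
               edited_content: Optional[str] = None) -> None:
        # Steps 2 and 3: a human reviews (optionally editing) and the decision is logged.
        if proposal.status != "pending":
            raise ValueError("proposal has already been reviewed")
        if edited_content is not None:
            proposal.content = edited_content
        proposal.status = "approved" if approve else "rejected"
        self.log.append(f"{proposal.status} by {approver}")

    def execute(self, proposal: Proposal) -> None:
        # Step 4: the system acts, but only on an approved proposal.
        if proposal.status != "approved":
            raise PermissionError("action has not been approved")
        self.executor(proposal.action, proposal.content)
        proposal.status = "executed"
        self.log.append(f"executed {proposal.action}")
```

In this sketch the only path to the side effect runs through `execute`, which refuses anything that is not in the `approved` state. Calling `execute` on a pending or rejected proposal raises `PermissionError` instead of sending.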

The EU AI Act's Article 14 on human oversight makes the direction of travel obvious: high-risk AI systems must be designed so that natural persons can effectively oversee them while they are in use. Even if a specific executive assistant use case does not end up formally classified as high-risk AI, enterprise buyers are already absorbing that mindset.

Approval-first aligns with the compliance story companies want to tell:

- A human understood the recommendation.

- A human approved the action.

- The system recorded the approval and outcome.

- The company can reconstruct what happened later.

That is a much stronger position than "the model was allowed to send unless someone stopped it."

Approval without an audit trail is only half the answer. If you cannot show who approved what, when they approved it, what context they saw, and what the final action was, you still have a governance gap.

A useful audit trail for AI assistants should capture:

| Audit requirement | Why it matters |
|---|---|
| Proposed action | Shows exactly what the AI intended to do |
| Context shown to approver | Proves the human approved with enough information |
| Approver identity | Establishes accountability and access control |
| Timestamp | Supports incident review, compliance review, and chronology |
| Outcome | Confirms whether the action was approved, edited, or rejected |
| Final executed content | Prevents ambiguity about what actually went out |

This is where approval-first becomes more than a UX choice. It becomes an operating control.
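The six audit requirements listed above map naturally onto a single record type. The following is a minimal sketch, assuming an append-only log of JSON lines. The names `AuditRecord`, `make_record`, and `to_json_line` are hypothetical, and the validation rules are illustrative assumptions rather than a compliance standard.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

VALID_OUTCOMES = {"approved", "edited", "rejected"}


@dataclass(frozen=True)
class AuditRecord:
    proposed_action: str          # what the AI intended to do
    context_shown: str            # what the approver saw before deciding
    approver_id: str              # who made the decision
    timestamp: str                # ISO 8601 timestamp in UTC
    outcome: str                  # "approved", "edited", or "rejected"
    final_content: Optional[str]  # what actually went out; None if rejected


def make_record(proposed_action: str, context_shown: str, approver_id: str,
                outcome: str, final_content: Optional[str] = None,
                now: Optional[datetime] = None) -> AuditRecord:
    """Build a record, refusing combinations that would leave the trail ambiguous."""
    if outcome not in VALID_OUTCOMES:
        raise ValueError(f"unknown outcome: {outcome!r}")
    if outcome == "rejected" and final_content is not None:
        raise ValueError("a rejected action has no executed content")
    if outcome != "rejected" and final_content is None:
        raise ValueError("approved or edited actions must record what was executed")
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    return AuditRecord(proposed_action, context_shown, approver_id,
                       stamp, outcome, final_content)


def to_json_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line, suitable for export."""
    return json.dumps(asdict(record), sort_keys=True)
```

Keeping `proposed_action` and `final_content` as separate fields is deliberate: it preserves the difference between what the AI suggested and what the human actually sent, which is the distinction an incident review usually needs.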

Autonomous assistants are appealing because they promise maximum convenience. If the system can send, reschedule, and follow up without your intervention, the argument goes, then you save even more time.

But the hidden cost is that every autonomous workflow creates a higher burden somewhere else:

- Legal burden: someone still owns the outcome.

- Security burden: broad permissions create broader blast radius.

- Operational burden: someone has to review incidents after the fact.

- Adoption burden: users become hesitant to trust the tool with important workflows.

Nylas's research also shows that speed is the primary reason teams adopt agentic AI, but reliability, integration coverage, permissions, and observability matter more than flashy demos once those workflows get closer to production. That is exactly why approval-first is winning. It preserves speed on preparation while controlling execution risk.

If you are evaluating an AI executive assistant, use this shortlist before you get impressed by feature demos:

- Does every external action require explicit approval?

- Can the user edit before approving?

- Is there one review queue, or are approvals fragmented across channels?

- Does the system log proposal, approver, timestamp, and final outcome?

- Can the audit log be exported for internal review or compliance?

- Are approval rules configurable by channel or risk level?

- Can the tool explain why it proposed the action?

Treat the following as red flags:

- "We only show approvals for risky actions" without a clear definition of risk.

- "The AI learns your style and sends automatically over time" for customer-facing channels.

- No exportable audit trail.

- No clear separation between suggested content and executed content.

- No explicit statement about how permissions are scoped.

Approval-first is not free. It adds friction. Someone still has to clear the queue. Some actions that could technically be automated remain gated by human review. For high-volume teams, the queue itself can become a design problem.

But that friction is often the point. It shifts the trade-off from "speed vs control" to "preparation speed with execution control." In many executive workflows, that is the correct optimization.

Another quote worth keeping:

The job of an executive assistant is not just to move fast. It is to move fast without creating avoidable risk.

Approval-first supports that standard better than broad autonomy does.

Approval-first is especially well suited to:

- executive email drafting and follow-up

- calendar coordination and meeting proposals

- travel planning and itinerary changes

- recruiting communication and candidate follow-up

- deal or client communication where tone and timing matter

- any workflow where auditability matters as much as speed

It is less important for purely internal, low-risk automations where the consequences of mistakes are minor and reversible.

Alyna is not competing to be the most autonomous assistant on the market. It is competing to be the most defensible and useful assistant for real executive work. That category wins when users can delegate drafting, triage, preparation, and coordination without wondering whether the system will act beyond their intent.

That is also a better long-term content angle. The category story should not be "AI can do everything." It should be "AI can handle the preparation layer so the executive can keep judgment, accountability, and trust."

- Approval-first AI means the assistant prepares the action, but a human must authorize the action before it executes.

- The model is winning because enterprise AI adoption now depends on trust, workflow design, and defensible oversight, not raw novelty.

- OpenAI, BCG, and Nylas all point to the same pattern: organizations want structured workflows, not uncontrolled autonomy.

- EU AI Act Article 14 strengthens the case for meaningful human oversight where AI influences consequential outcomes.

- Approval matters, but approval plus audit trail is what makes the workflow operationally credible.

- The real trade-off is more queue friction in exchange for more control, easier adoption, and a much stronger compliance narrative.

Alyna is built around that model: it drafts, proposes, and queues; you approve; the system keeps the receipt. For the next layer down, see approval workflows for executives, security and compliance for AI executive assistants, and SOC 2, GDPR & EU AI Act: what to require from your AI executive assistant.

Alyna helps executives delegate the work, not the judgment: draft-first, approve-then-send, full audit trail.