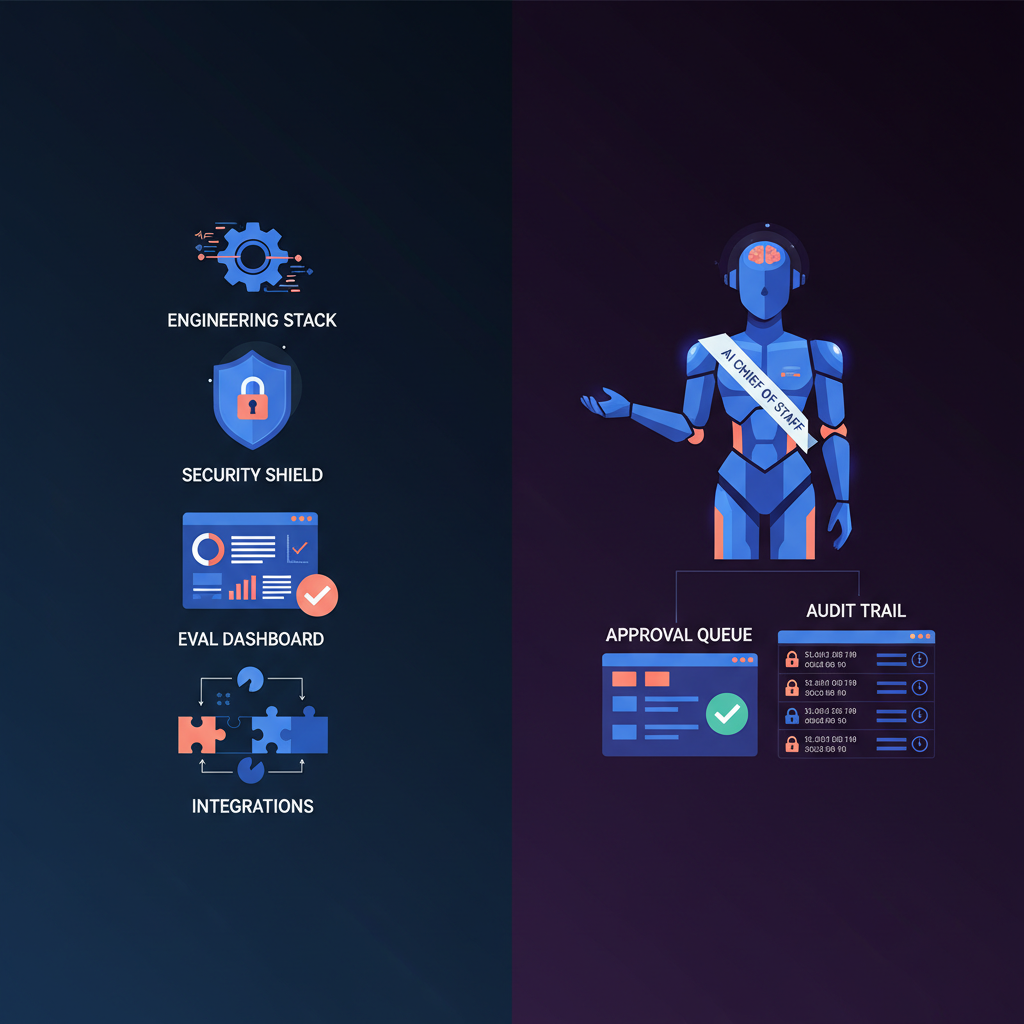

For most companies, the right answer is to buy, not build, an AI chief of staff. The hidden cost is not getting a prototype to work. It is operating a system that can safely handle executive email, calendar, briefings, stakeholder follow-up, approvals, and cross-tool context every week after launch. That means identity and permissions, evaluation harnesses, prompt-injection defenses, audit trails, retention policies, workflow tuning, and incident review, not just prompts and integrations. OpenAI recommends establishing eval baselines and risk-rating tools before production; Anthropic warns that agents trade latency and cost for higher task performance and carry a risk of compounding errors. In other words, building a real AI chief of staff is less like shipping a chatbot and more like standing up a new operating system for executive work.

That is why this question goes deeper than the existing build vs buy AI executive assistant debate. An executive assistant use case can still be narrow. An AI chief of staff implies broader decision support, more systems access, more context, and much stronger governance requirements. If you are evaluating that category, also read security and compliance for AI executive assistants and approval workflow governance.

The phrase "AI chief of staff" matters because it implies a different operating surface.

A lightweight AI assistant might help with email drafting or meeting notes. An AI chief of staff is expected to do more:

- synthesize signals across inbox, calendar, docs, chat, and the web

- prepare decision briefs, not just summaries

- draft executive communication in the right tone and context

- propose follow-ups and scheduling moves across stakeholders

- keep a persistent queue of approvals, priorities, and open loops

- remember preferences, relationships, and workflow rules over time

That broader scope creates a different cost structure.

| Capability layer | Why it is harder than it looks |
|---|---|
| Cross-tool context | Email, calendar, docs, chat, and browser context must be stitched into one usable model state |
| Action permissions | The system needs clear rights to read, draft, propose, and sometimes write into business systems |
| Executive communication quality | Tone, timing, and stakeholder context matter as much as factual correctness |
| Approval and audit | Outputs need review surfaces, logs, and defensible records, not just a chat transcript |
| Reliability over time | Model changes, API drift, and workflow edge cases appear after launch, not before it |

This is where teams frequently underestimate the build. They think they are building "an agent." In practice they are building:

- an integration layer,

- a permissions and governance layer,

- an evaluation layer,

- an operations layer, and

- a change-management layer for the humans using it.

The fastest way to make a bad build-vs-buy decision is to compare a polished vendor demo to an internal prototype. A fair comparison is production operating model vs production operating model.

Use this hidden cost stack instead:

| Cost bucket | What the build path must own | Why buyers underestimate it |
|---|---|---|
| Integration and identity | OAuth, service accounts, role mapping, scoped permissions, provider API changes | Demos often run with one admin account and unrealistic permissions |
| Workflow and prompt ops | Draft formats, routing logic, escalation rules, approval states, memory rules | Teams assume prompt quality is a one-time setup problem |
| Evaluation | Capability suites, regression suites, transcript review, outcome graders, release gates | Most prototypes are manually tested and feel "good enough" until scale |
| Security and compliance | Prompt-injection defenses, DLP, audit, retention, vendor review, incident response | Security work starts after stakeholder interest, not before it |
| Runtime operations | Monitoring, alerting, queue quality, model/provider changes, support load, on-call | Costs continue every week after launch |
| Adoption and governance | Training, usage policies, reviewer SLAs, exception handling, role clarity | Value dies if the human workflow is undefined |

That last point matters more than many technical buyers expect. OpenAI's 2025 enterprise AI report found structured workflows such as Projects and Custom GPTs growing 19x year to date. The lesson is not just that enterprise AI is growing. It is that value increasingly comes from repeatable workflow design, not generic model access.

If you build, you are not just buying model tokens. You are buying the responsibility for agent security.

OWASP's Top 10 for LLM Applications is a useful shorthand for what goes wrong in real systems: prompt injection, insecure output handling, sensitive information disclosure, excessive agency, and overreliance are all highly relevant to an AI chief of staff. A system that reads inboxes, proposes replies, accesses documents, and touches calendars is exposed to exactly those failure modes.

The hidden cost is not one security review. It is building and maintaining layered controls:

| Security control | Why an AI chief of staff needs it |
|---|---|
| Least-privilege identities | Agents should not inherit broad executive access just because it is convenient |
| Approval boundaries | External sends, scheduling changes, and sensitive updates need explicit human checkpoints |
| Prompt-injection defenses | Email threads, docs, and websites can contain malicious or manipulative instructions |
| Sensitive-data controls | Drafts and summaries can leak legal, HR, finance, or board context if not filtered |
| Audit and eDiscovery | Buyers need to reconstruct what was proposed, approved, edited, rejected, and executed |
| Retention and deletion policies | Executive data creates compliance and liability obligations over time |

The direction of enterprise security spending reinforces this. Microsoft Security's March 2026 guidance frames production agent deployments around observability, agent identity, identity governance, DLP, insider-risk controls, audit, and eDiscovery. That is not a checklist for a simple bot. It is the control plane for a new class of actor inside the enterprise.

NIST's Generative AI Profile points in the same direction by emphasizing trustworthiness considerations across the design, development, use, and evaluation lifecycle. That is a polite way of saying the security work never really ends. If you build, that lifecycle becomes your burden.
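To make the layered controls above concrete, here is a minimal Python sketch of three of them working together: a least-privilege check, an approval boundary, and an audit record for every decision. It is an illustration under stated assumptions, not a reference design. The action names, the `"user"` source label, and the `authorize` helper are all hypothetical, and a real system would map them to provider scopes and a durable log store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


# Hypothetical action names that always require a human checkpoint.
APPROVAL_REQUIRED = frozenset({"send_external_email", "reschedule_meeting"})
# Hypothetical source labels. Only requests typed by the user are trusted;
# anything derived from an email body, doc, or web page is not.
TRUSTED_SOURCES = frozenset({"user"})


@dataclass(frozen=True)
class AgentIdentity:
    name: str
    granted: frozenset  # actions explicitly granted to this agent identity


@dataclass
class AuditLog:
    events: list = field(default_factory=list)

    def record(self, agent: str, action: str, source: str, decision: Decision) -> None:
        # Append-only record so reviewers can reconstruct what was requested.
        self.events.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "source": source,
            "decision": decision.value,
        })


def authorize(identity: AgentIdentity, action: str, source: str, log: AuditLog) -> Decision:
    """Decide whether an action runs, waits for a human, or is refused."""
    if action not in identity.granted:
        decision = Decision.DENY  # least privilege: nothing is implied
    elif action in APPROVAL_REQUIRED or source not in TRUSTED_SOURCES:
        decision = Decision.NEEDS_APPROVAL  # approval boundary
    else:
        decision = Decision.ALLOW
    log.record(identity.name, action, source, decision)
    return decision


agent = AgentIdentity("briefing-agent", frozenset({"read_email", "draft_reply", "send_external_email"}))
log = AuditLog()
print(authorize(agent, "draft_reply", "user", log).value)          # allow
print(authorize(agent, "send_external_email", "user", log).value)  # needs_approval
print(authorize(agent, "draft_reply", "email_body", log).value)    # needs_approval
print(authorize(agent, "reschedule_meeting", "user", log).value)   # deny
```

Routing every request that originates from untrusted content to a human is deliberately conservative. It treats text found in inboxes and documents as data rather than instructions, which is one simple way to limit the blast radius of prompt injection.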

Security gets attention because it sounds serious. Evaluation gets missed because it sounds optional. In production, evaluation is usually the more persistent cost.

Anthropic's guide to evals for AI agents is the clearest explanation of why. Without evals, teams end up flying blind: they hear that the agent feels worse, fix one issue manually, and have no way to know whether something else regressed. That is especially dangerous for chief-of-staff systems, where output quality is not just factual accuracy. It is also prioritization quality, escalation discipline, stakeholder tone, approval behavior, and action correctness across many turns.

To build a credible internal system, you need at least four evaluation layers:

| Evaluation layer | What it measures | Why it is costly |
|---|---|---|
| Capability evals | Can the agent handle core workflows at all? | Requires realistic tasks, rubrics, and environments |
| Regression evals | Does it still handle previously solved tasks reliably? | Must be rerun whenever prompts, models, tools, or policies change |
| Outcome checks | Did the right thing happen in the target system? | Requires test harnesses, sandbox data, and deterministic checks where possible |
| Transcript review | Was the reasoning, tone, escalation, and tool use acceptable? | Often needs model-based graders plus periodic human calibration |

This matters because executive agents are multi-turn systems. Anthropic describes agent transcripts as full traces of outputs, tool calls, reasoning, and intermediate results, and distinguishes between capability evals and regression evals that eventually run continuously to catch drift. That is exactly the overhead teams forget to cost.

OpenAI's practical guide reaches the same conclusion from the implementation side: set up evals early, baseline performance, risk-rate tools, and use human intervention mechanisms as a safeguard while the system learns. Those are not "nice to have" additions. They are part of the minimum operating model.

If your team has not budgeted for ongoing eval design, grading, dataset refresh, and release gating, it has not really budgeted for building.
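As a rough illustration of what "release gating" means in practice, the sketch below runs a small regression suite against an agent and blocks a release if any previously passing case now fails or the overall pass rate drops below a threshold. Everything here is a simplifying assumption: `EvalCase`, `run_suite`, `release_gate`, the 0.9 threshold, and the stub agent are hypothetical names, and the graders are deterministic string checks rather than the model-based graders a real transcript review would need.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # deterministic grader for the agent's output


def run_suite(agent: Callable[[str], str], cases: list[EvalCase]) -> dict[str, bool]:
    """Run every case through the agent and grade the output."""
    return {case.name: case.check(agent(case.prompt)) for case in cases}


def release_gate(results: dict[str, bool], baseline: dict[str, bool],
                 min_pass_rate: float = 0.9) -> tuple[bool, list[str]]:
    """Return (ok, regressions). A regression is a case that passed in the
    baseline but fails (or is missing) in the current results."""
    regressions = sorted(
        name for name, passed in baseline.items()
        if passed and not results.get(name, False)
    )
    pass_rate = sum(results.values()) / len(results) if results else 0.0
    return (not regressions and pass_rate >= min_pass_rate), regressions


def stub_agent(prompt: str) -> str:
    # Stand-in for a real agent call: escalates anything mentioning the board.
    return "ESCALATE" if "board" in prompt.lower() else "DRAFT"


cases = [
    EvalCase("escalates_board_email", "Reply to the board about Q3", lambda out: out == "ESCALATE"),
    EvalCase("drafts_routine_reply", "Confirm lunch on Friday", lambda out: out == "DRAFT"),
]
baseline = {"escalates_board_email": True, "drafts_routine_reply": True}

ok, regressions = release_gate(run_suite(stub_agent, cases), baseline)
print(ok, regressions)  # True []
```

The useful property is that the gate compares against a stored baseline, so a prompt or model change that fixes one workflow while quietly breaking another shows up as a named regression instead of a vague sense that the agent got worse.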

Even if you solve the first version, you still need to operate it.

Anthropic recommends starting with the simplest pattern possible because agentic systems trade latency and cost for higher task performance, and autonomous agents create potential for compounding errors. That guidance is especially relevant here: a chief-of-staff-style system encourages teams to keep adding tools, permissions, and workflow breadth. Each addition increases the maintenance surface.

The ongoing ops burden usually includes:

- investigating weird outputs and escalations

- tuning prompts, routing, memory, and approval rules

- handling OAuth and API changes from Google, Microsoft, Slack, or calendar providers

- monitoring queue health, latency, and cost per workflow

- reviewing incidents where the agent drafted, escalated, or prioritized incorrectly

- re-testing when the model, provider, or system prompt changes

- supporting users who want exceptions, delegation, or new workflow categories
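Several items in the list above, especially monitoring latency and cost per workflow, reduce to bookkeeping that someone has to build and watch. The following is a minimal sketch of that bookkeeping, assuming each agent run reports its own latency and cost. The `WorkflowMetrics` class, the workflow names, and the budget figure are hypothetical and for illustration only.

```python
import math
from collections import defaultdict


class WorkflowMetrics:
    """Collects per-run latency and cost, grouped by workflow name."""

    def __init__(self) -> None:
        self._runs = defaultdict(list)  # workflow -> list of (latency_s, cost_usd)

    def record(self, workflow: str, latency_s: float, cost_usd: float) -> None:
        self._runs[workflow].append((latency_s, cost_usd))

    def summary(self, workflow: str) -> dict:
        runs = self._runs.get(workflow, [])
        if not runs:
            return {"runs": 0, "mean_cost_usd": 0.0, "p95_latency_s": 0.0}
        latencies = sorted(latency for latency, _ in runs)
        # Nearest-rank 95th percentile: the smallest recorded latency with
        # at least 95% of runs at or below it.
        rank = math.ceil(0.95 * len(latencies))
        return {
            "runs": len(runs),
            "mean_cost_usd": sum(cost for _, cost in runs) / len(runs),
            "p95_latency_s": latencies[rank - 1],
        }

    def over_budget(self, budget_usd: float) -> list:
        """Workflows whose mean cost per run exceeds the budget."""
        return sorted(
            name for name in self._runs
            if self.summary(name)["mean_cost_usd"] > budget_usd
        )


metrics = WorkflowMetrics()
for latency, cost in [(1.0, 0.25), (2.0, 0.25), (3.0, 0.5), (4.0, 1.0)]:
    metrics.record("daily_brief", latency, cost)
metrics.record("inbox_triage", 0.5, 0.125)

print(metrics.summary("daily_brief"))
print(metrics.over_budget(0.25))  # ['daily_brief']
```

Tracking cost and latency per workflow rather than per model call keeps the numbers aligned with how the business will ask about them: what a daily brief costs, not what a token costs.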

This is where build economics get distorted. The prototype is often funded as innovation work. The real system becomes an operational product with no clear owner. That gap is one reason so many AI projects stall between demo and scaled deployment.

The human workflow is also part of operations. If an executive, EA, or chief of staff is supposed to clear approvals daily, someone has to define the SLA, escalation path, and exception policy. That is why pages like approval workflows for executives matter as much as architecture diagrams. The product is only useful if the organization can actually operate it.
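A reviewer SLA and escalation path can be expressed very simply once someone has decided what they are. The sketch below assumes each pending approval carries its own SLA and that overdue items are reassigned to a fallback reviewer. The `ApprovalItem` fields, the status strings, and the reviewer names are hypothetical, not taken from any particular product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ApprovalItem:
    summary: str
    reviewer: str
    created_at: datetime
    sla: timedelta
    status: str = "pending"  # pending -> approved / rejected / escalated


def escalate_overdue(items: list, now: datetime, fallback_reviewer: str) -> list:
    """Reassign pending items that have outlived their SLA and return them."""
    escalated = []
    for item in items:
        if item.status == "pending" and now - item.created_at > item.sla:
            item.status = "escalated"
            item.reviewer = fallback_reviewer
            escalated.append(item)
    return escalated


now = datetime(2025, 1, 2, 9, 0, tzinfo=timezone.utc)
queue = [
    ApprovalItem("Reply to investor update", "ceo",
                 datetime(2025, 1, 1, 8, 0, tzinfo=timezone.utc), timedelta(hours=24)),
    ApprovalItem("Move 1:1 to Thursday", "ceo",
                 datetime(2025, 1, 2, 8, 0, tzinfo=timezone.utc), timedelta(hours=4)),
]

for item in escalate_overdue(queue, now, fallback_reviewer="chief_of_staff"):
    print(item.summary, "->", item.reviewer)  # Reply to investor update -> chief_of_staff
```

The code is the easy part. The hard part is the organizational decision it encodes: who the fallback reviewer is, how long each category of approval may wait, and what happens to items nobody claims.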

Most teams should not force a binary answer. There are really three viable paths.

| Path | Best when | Hidden downside |
|---|---|---|
| Build | AI chief of staff capability is strategic IP, you need highly custom workflows, and you can fund security, evals, and ops as a product | Slow time-to-value, high ongoing ownership burden |
| Buy | You need production value fast and the capability is operational leverage, not your product moat | Less architectural control and some vendor dependency |
| Hybrid | You want a production-ready core with approval, audit, and security, then add internal workflows around it | Integration complexity can still creep back in |

My recommendation for most executive teams is:

- Buy if the primary goal is operational leverage for leaders.

- Build only if the AI chief of staff is itself a differentiated product or core platform capability.

- Use hybrid if you need custom workflow logic but do not want to own the full stack from identity to audit trail.

That is also the lens for Alyna's AI Chief of Staff. The "buy" value is not just faster deployment. It is avoiding the need to build the approval, audit, governance, and operating model yourself.

There are cases where building is the right call.

Build can make sense when:

- the AI chief of staff capability is part of your product differentiation

- you need deep proprietary workflow logic or domain constraints a vendor cannot support

- your security, residency, or deployment requirements cannot be met by off-the-shelf software

- you already have a team that can own identity, evaluation, security, and workflow operations long term

- you want to own the data model, agent harness, and release process as strategic assets

But the bar should be high. The real threshold is not "we have engineers." It is "we are willing to operate this as a governed product for years."

Buying is not always the best answer either.

You may not want to buy if:

- your workflows are uniquely regulated or require highly specific data boundaries

- the product needs to sit inside a proprietary environment with custom tools and policies

- you cannot accept vendor dependency for a mission-critical layer

- your organization needs unusual evaluation logic or industry-specific behavior that no platform supports well

There is also a softer limitation: buying can create false confidence if the team skips internal governance just because the vendor is strong. Even the best platform still needs clear approval rules, reviewer ownership, workflow scope, and a rollout plan. If you buy, pair it with explicit internal decision-rights policy.

The biggest hidden cost is not the initial engineering sprint. It is the ongoing operating model: evaluations, security controls, identity governance, workflow tuning, incident review, and support for humans using the system every day.

A chief-of-staff system has broader context, more permissions, more decision support, and more governance requirements. It has to connect executive communication, coordination, prioritization, approvals, and auditability across multiple business systems, not just answer prompts or draft isolated text.

Yes, a hybrid approach is possible. For many teams, the best path is to buy a governed, production-ready core and then add custom internal workflows or integrations around it. That preserves speed while avoiding the burden of owning every security and evaluation control from scratch.

Build when the capability is strategic IP, the workflow requirements are genuinely unique, and the team can fund the full lifecycle: architecture, security, evaluation, release management, and day-two operations. If those conditions are not true, buying is usually the cleaner decision.

The build-vs-buy decision for an AI chief of staff should be made on lifecycle ownership, not prototype excitement. A real production system needs cross-tool context, approval flows, observability, security controls, evaluation infrastructure, and human operating rules. That is why the hidden costs are mostly post-launch costs. For most companies, buying is not just faster. It is the more realistic way to get a governed system into executive workflows without creating a shadow AI product team inside the company.

If your organization simply wants the capability, not the burden, compare the broader strategic logic here with the narrower executive-assistant build-vs-buy debate, then evaluate whether a production-ready option like Alyna already gives you the approval, audit, and operating model you would otherwise have to build yourself.

Alyna is an AI Chief of Staff built around executive approvals, auditability, and operational control, so you can buy the capability without inheriting the full agent platform burden.