Personal AI workers are no longer just a concept demo. They are becoming a real operating layer for knowledge work: systems that can interpret goals, use tools, move across apps, and finish multi-step tasks on your behalf. The shift is visible in both product design and enterprise demand. Microsoft's 2025 Work Trend Index says every employee is moving toward an "agent boss" role, while OpenAI's 2025 enterprise report shows AI usage moving from casual chat to structured, repeatable workflows. And according to Nylas's 2026 State of Agentic AI research, 67% of teams are already building or shipping custom agentic workflows.

This page is intentionally not a general personal-AI buyer guide. It is a bounded-autonomy framework for executives deciding how far a worker should be allowed to go before a human must step in.

That creates a new practical question for executives: when does a personal AI worker stop being an assistant and start being an operator?

My view: the category becomes useful only when it is built around bounded autonomy. Let the worker read, search, synthesize, and draft aggressively. Require explicit approval for consequential outward actions. Keep a clear audit trail. That is how you get leverage without losing control.

If you want the adjacent operating model, also read our guides on always-on vs on-demand AI assistants, workflow automation for executives, and approval workflow governance.

A personal AI worker is not just a chatbot with a nicer UI.

OpenAI's practical guide to building agents defines agents as systems that independently accomplish tasks on a user's behalf. Anthropic's guide to building effective agents makes a useful distinction between:

- workflows, where the system follows predefined code paths

- agents, where the model dynamically directs its own process and tool use

That distinction helps clarify what "worker" should mean in practice:

| System type | What it does | Example |
|---|---|---|
| Assistant | Responds to a prompt, then stops | "Draft this email" |
| Workflow | Follows a fixed sequence | "When a meeting ends, summarize notes and create tasks" |
| Personal AI worker | Interprets a goal, chooses steps, uses tools, and adjusts mid-task | "Prepare tomorrow's board brief, draft follow-ups, propose schedule changes, and wait for approval before sending" |

The worker model matters because modern executive work is not one-step work. It is cross-app, cross-context, and interruption-heavy. Email affects calendar. Calendar affects follow-ups. Follow-ups affect customer, candidate, and investor expectations. A useful worker has to operate across that chain.

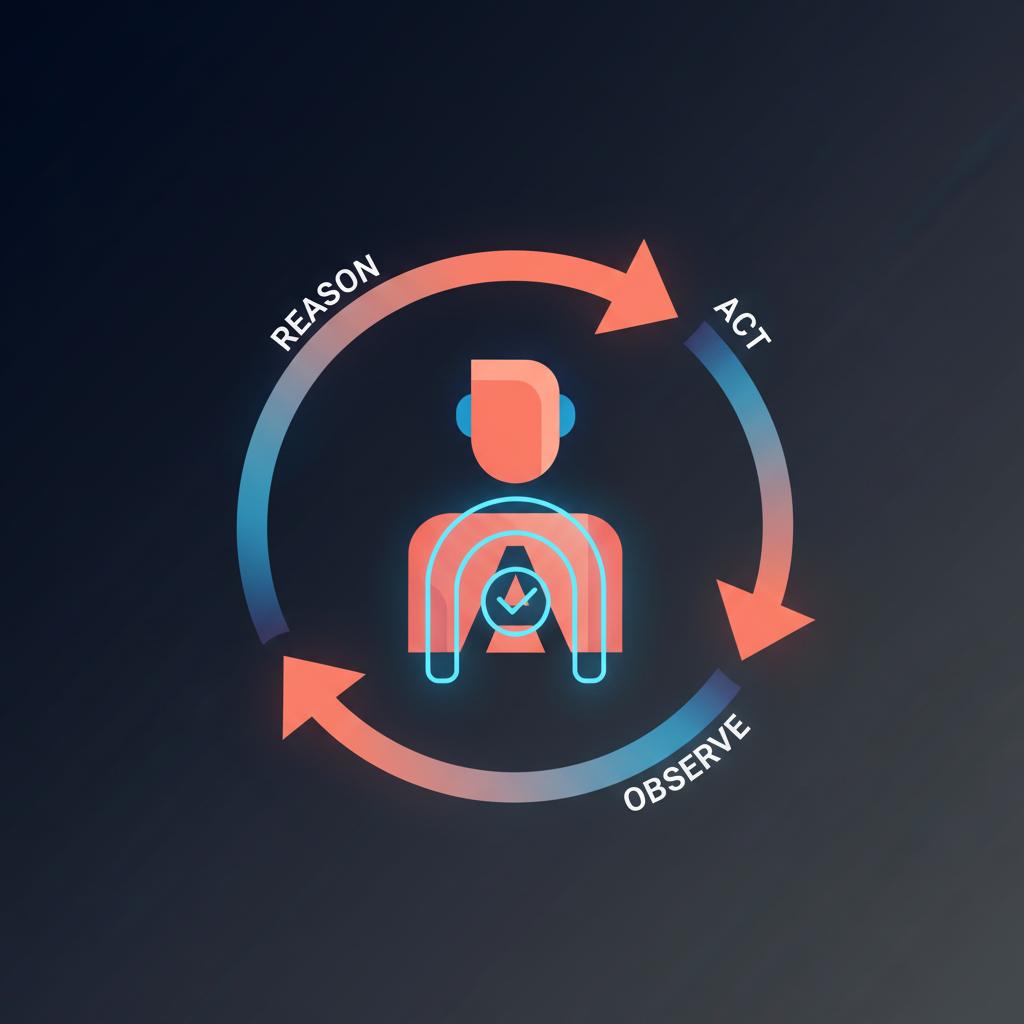

Most personal AI workers follow a loop that looks something like this:

- Understand the goal.

- Plan the next steps.

- Use tools to gather context or take actions.

- Observe results and adjust.

- Escalate, pause, or ask for approval when needed.

That pattern shows up across both vendor guidance and production behavior. OpenAI describes agents as using models, tools, and instructions inside a run loop, with explicit guardrails and clear exit conditions. Anthropic describes agents as operating independently, getting ground truth from the environment at each step, and pausing for human feedback at checkpoints or blockers.
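To make the loop concrete, here is a toy sketch in Python. It is not OpenAI's or Anthropic's implementation: `Step`, `RunResult`, `run_worker`, the scripted planner, and the action names are all hypothetical stand-ins for a model call and real tools. What it does show are the two properties both vendor guides stress: a hard exit condition (`max_steps`) and an explicit pause when the next step needs approval.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical actions that must pause for a human (an assumption for this sketch).
NEEDS_APPROVAL = {"send_email", "confirm_meeting", "book_travel"}


@dataclass
class Step:
    action: str   # tool the worker wants to call, or "done"
    payload: str  # arguments or draft content for that tool


@dataclass
class RunResult:
    status: str                                   # "done", "paused_for_approval", or "max_steps_reached"
    observations: list = field(default_factory=list)
    pending: Optional[Step] = None                # the step waiting for a human, if any


def run_worker(goal: str, plan_next_step: Callable[[str, list], Step],
               tools: dict, max_steps: int = 10) -> RunResult:
    """Plan, act, observe, repeat; exit on 'done', at an approval gate, or at max_steps."""
    observations: list = []
    for _ in range(max_steps):
        step = plan_next_step(goal, observations)   # plan from the goal and results so far
        if step.action == "done":
            return RunResult("done", observations)
        if step.action in NEEDS_APPROVAL:
            # Escalate: hand the proposed action to a human instead of executing it.
            return RunResult("paused_for_approval", observations, pending=step)
        observations.append(tools[step.action](step.payload))  # act, then observe
    return RunResult("max_steps_reached", observations)


# Usage with a scripted stand-in for the model-driven planner.
def scripted_planner(goal: str, observations: list) -> Step:
    script = [Step("search_inbox", "board deck"),
              Step("draft_reply", "Confirming Q3 numbers"),
              Step("send_email", "Confirming Q3 numbers")]
    return script[len(observations)]


tools = {"search_inbox": lambda q: f"3 threads about {q}",
         "draft_reply": lambda text: f"draft: {text}"}
result = run_worker("Reply to the board thread", scripted_planner, tools)
print(result.status, result.pending.action)  # paused_for_approval send_email
```

The worker searches and drafts on its own, then stops at the send step and hands it back with the draft attached. In a real product the planner would be a model call, and the paused step would land in a review queue.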

The operator-level point is simple: the worker is not valuable because it thinks. It is valuable because it can keep a task moving across steps without needing you to re-prompt every transition.

Examples:

- read the inbox, identify the three emails that matter, draft replies, and queue them

- turn today's meetings into action items, owners, due dates, and proposed follow-ups

- research a prospect, update the brief, and suggest next meeting times

- compile travel constraints, compare options, and hold the booking for approval

This is why the phrase "personal AI worker" is useful. It implies labor, not just language.

Three separate trends are converging.

OpenAI reports that usage of structured workflow features such as Projects and Custom GPTs increased 19x year-to-date in 2025, while workers report saving 40 to 60 minutes per day and heavy users report saving more than 10 hours per week. That is not just novelty usage. It is workflow usage.

Microsoft's 2025 Work Trend Index argues that companies are moving toward "human-agent teams," says 82% of leaders expect to use digital labor in the next 12 to 18 months, and introduces the idea that every worker becomes an "agent boss." Whether or not you like the branding, the operating point is right: people will increasingly direct AI workers instead of doing every step themselves.

Nylas reports that 67% of teams are already building or shipping custom agentic workflows, and that trust, control, and failure handling remain the main constraints. In other words, adoption is real, but so is the design problem.

Approval is not a philosophical preference. It is an operating control.

The right mental model is:

A personal AI worker should be highly autonomous in preparation and tightly controlled in execution.

That means the worker can often do these steps without bothering you:

- search

- retrieve documents

- summarize threads

- cluster action items

- draft messages

- propose schedules

- compare options

But it should pause before:

- sending an email

- booking or changing travel

- confirming a meeting

- messaging a candidate

- updating a customer-facing record

- making a financial or contractual commitment
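The split between preparation and execution is easiest to enforce when it is written down as an explicit policy rather than left to the model's judgment. Here is a minimal sketch, assuming hypothetical action names (`draft_message`, `send_email`, and so on) rather than any real product's tool identifiers. Treating unlisted actions as requiring approval is my own assumption, not something the sources prescribe.

```python
# Hypothetical action names; a real product would map these to its own tool calls.
PREPARATION = {"search", "retrieve_documents", "summarize_thread",
               "cluster_action_items", "draft_message", "propose_schedule",
               "compare_options"}
EXECUTION = {"send_email", "book_travel", "change_travel", "confirm_meeting",
             "message_candidate", "update_customer_record", "financial_commitment"}


def control_for(action: str) -> str:
    """Return 'autonomous' for preparation work and 'approval' for everything else."""
    if action in PREPARATION:
        return "autonomous"
    if action in EXECUTION:
        return "approval"   # consequential outward action: pause for a human
    return "approval"       # unknown action: fail closed rather than guess


print(control_for("draft_message"), control_for("send_email"))  # autonomous approval
```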

This is not theoretical. OpenAI's Agents SDK human-in-the-loop guide explicitly documents an approval flow: a tool call can be marked as requiring approval, the run pauses, and execution resumes only after approve or reject decisions are recorded. That is what production-grade control looks like: pause, inspect, approve, resume.
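The same pause, inspect, approve, resume pattern can be sketched in plain Python. This does not use the Agents SDK. `PendingAction`, `ApprovalGate`, `propose`, `decide`, and `resume` are hypothetical names chosen for illustration. The sketch also supports approving with edits, so the content that actually executes can differ from what the worker proposed.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PendingAction:
    action: str
    proposed_content: str
    decision: Optional[str] = None       # "approved" or "rejected", set by a human
    final_content: Optional[str] = None  # what will actually execute if approved


class ApprovalGate:
    """Hold proposed actions until a human decision is recorded, then resume."""

    def __init__(self, execute: Callable[[str, str], str]):
        self.execute = execute
        self.queue: list[PendingAction] = []

    def propose(self, action: str, content: str) -> PendingAction:
        item = PendingAction(action, content)
        self.queue.append(item)  # pause: nothing runs yet
        return item

    def decide(self, item: PendingAction, approve: bool,
               edited_content: Optional[str] = None) -> None:
        item.decision = "approved" if approve else "rejected"
        if approve:
            # An edited approval replaces the proposal; otherwise use it as-is.
            item.final_content = edited_content if edited_content is not None else item.proposed_content

    def resume(self) -> list[str]:
        """Execute approved items, drop rejected ones, keep undecided ones queued."""
        results, still_pending = [], []
        for item in self.queue:
            if item.decision == "approved":
                results.append(self.execute(item.action, item.final_content))
            elif item.decision is None:
                still_pending.append(item)
        self.queue = still_pending
        return results


gate = ApprovalGate(lambda action, content: f"{action} executed: {content}")
draft = gate.propose("send_email", "Draft follow-up to candidate")
gate.decide(draft, approve=True, edited_content="Edited follow-up to candidate")
print(gate.resume())  # ['send_email executed: Edited follow-up to candidate']
```

Undecided items stay in the queue across `resume` calls. That is the property that keeps a pending send from slipping out while the approver is busy.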

Approval without evidence is weak. If the system says "approved" but you cannot reconstruct what was proposed, what context the approver saw, what changed before execution, and what actually happened, you still have a governance gap.

A credible personal AI worker should maintain an audit trail with at least:

| Audit element | Why it matters |
|---|---|
| Proposed action | Shows what the worker intended to do |
| Context shown to the approver | Proves the approval was informed |
| Approver identity | Establishes accountability |
| Timestamp | Supports review and incident reconstruction |
| Final executed content | Prevents ambiguity about what actually went out |
| Result or status | Confirms whether the action was approved, edited, rejected, or executed |
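One way to guarantee that every entry carries those six elements is to make them required fields of a single record type and write each entry as a JSON line to an append-only log. The field names, the status vocabulary, and the JSON-lines format below are assumptions for illustration, not a standard.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional

ALLOWED_STATUSES = {"approved", "edited", "rejected", "executed"}


@dataclass(frozen=True)
class AuditRecord:
    proposed_action: str          # what the worker intended to do
    context_shown: str            # what the approver saw when deciding
    approver: str                 # who decided (accountability)
    timestamp: str                # ISO 8601 in UTC, for incident reconstruction
    final_content: Optional[str]  # what actually went out (None if rejected)
    status: str                   # approved / edited / rejected / executed

    def __post_init__(self):
        # Reject free-form statuses so the log stays queryable.
        if self.status not in ALLOWED_STATUSES:
            raise ValueError(f"unknown status: {self.status}")


def record(proposed_action: str, context_shown: str, approver: str,
           final_content: Optional[str], status: str) -> str:
    """Build one audit entry and serialize it as a JSON line."""
    entry = AuditRecord(proposed_action, context_shown, approver,
                        datetime.now(timezone.utc).isoformat(), final_content, status)
    return json.dumps(asdict(entry))


line = record("send_email to lead investor", "thread history + draft v2",
              "ceo@example.com", "Final text as sent", "edited")
```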

That logic lines up with broader governance guidance. OpenAI's paper on governing agentic AI systems emphasizes constraining action space, requiring approval, making agent activity legible, and maintaining interruptibility. The EU AI Act's Article 14 on human oversight says oversight should let people understand limitations, monitor operation, override outputs, and intervene or stop the system. NIST's AI Risk Management Framework likewise centers governance and lifecycle accountability.

Even if your use case is not legally classified as high-risk AI, those are still the right product instincts.

This is where many articles get sloppy. They either argue for full autonomy or for blanket approval on every single step. Production systems usually need a more nuanced policy.

| Task type | Example | Default control |
|---|---|---|
| Read-only, reversible | Search inbox, summarize notes, extract tasks | Can run autonomously |
| Preparatory but user-visible | Draft email, propose time slots, create meeting brief | Can run autonomously, but must be reviewable |
| Reversible write with low downside | Save a draft, create an internal ticket, propose but not confirm | Often safe with lightweight approval |
| External and commitment-creating | Send email, confirm meeting, submit outreach, book travel | Explicit approval |
| High-stakes or hard-to-reverse | Legal, financial, personnel, compliance-sensitive actions | Explicit approval plus clear audit trail and easy interruption |
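One way to make the table operational is to describe each task by a few properties and derive the default control from them. Here is a minimal sketch. The property flags and the `Control` tier names are hypothetical labels for the table's rows. The rule that a multi-step plan inherits the strictest control of any of its steps is my own assumption.

```python
from enum import IntEnum


class Control(IntEnum):
    # Ordered from least to most oversight, so max() picks the stricter control.
    AUTONOMOUS = 0
    REVIEWABLE = 1
    LIGHT_APPROVAL = 2
    EXPLICIT_APPROVAL = 3
    APPROVAL_PLUS_AUDIT = 4


def default_control(*, changes_state: bool, user_visible: bool,
                    creates_commitment: bool, high_stakes: bool) -> Control:
    """Map a task's properties to its default control, checking the riskiest first."""
    if high_stakes:
        return Control.APPROVAL_PLUS_AUDIT
    if creates_commitment:
        return Control.EXPLICIT_APPROVAL
    if changes_state:
        return Control.LIGHT_APPROVAL
    if user_visible:
        return Control.REVIEWABLE
    return Control.AUTONOMOUS


def control_for_plan(steps: list[dict]) -> Control:
    """A multi-step plan needs the strictest control of any of its steps."""
    return max((default_control(**s) for s in steps), default=Control.AUTONOMOUS)


draft = dict(changes_state=False, user_visible=True, creates_commitment=False, high_stakes=False)
send = dict(changes_state=True, user_visible=True, creates_commitment=True, high_stakes=False)
print(control_for_plan([draft, send]).name)  # EXPLICIT_APPROVAL
```

Checking the riskiest property first means a task that is both user-visible and commitment-creating always lands in the stricter tier.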

This is the right middle ground. It preserves speed on the information and drafting layers while keeping consequential acts under human control.

Approval-first design is not free. It adds queue friction. Someone still has to clear the final action. And as task volume grows, the review queue becomes a product problem of its own.

But defensibility matters more as stakes rise.

Anthropic's February 2026 research on agent autonomy in practice is useful here. It found that experienced users often auto-approve more actions over time, but they also interrupt more often when needed. That is a strong reminder that good oversight is not always "approve every micro-step." In software environments, experienced users may prefer monitoring plus intervention.

Executive workflows are different.

Why? Because software tasks are often testable before release. Executive actions are often social, external, and irreversible. A misrouted email, a badly timed candidate follow-up, or a mistaken calendar commitment is not just a bug. It is a relationship event. That is why approval-first is especially strong for executive support even if other domains move toward lighter-touch supervision.

If you are evaluating a personal AI worker, these are the common ways things break:

- Approval in name only. The product says it is "human in the loop," but in practice it auto-sends or auto-books once a vague confidence threshold is cleared. That is not approval-first. That is permission with branding.

- No visibility. If the worker cannot show what it intends to do, what it already did, and why it is stuck, oversight becomes guesswork.

- Overbroad access. An agent with broad mailbox, calendar, browser, and CRM access but vague channel rules has a wider blast radius than most teams realize.

- No reconstructable history. If Legal, Security, or Ops asks what happened last Tuesday, you should not need to reconstruct the answer from app logs and memory.

If you want a personal AI worker that is actually usable in executive work, require:

- clear permission scopes by tool and action type

- explicit approval for external actions

- editable approvals, not just approve-or-reject buttons

- a single review queue instead of fragmented approvals across apps

- full audit history with proposal, approver, timestamps, and final output

- visibility into the plan and recent steps

- easy interruption or stop controls

- clear boundaries for what can run automatically
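Permission scopes and a stop control are easiest to audit when they live in one declarative place. Here is a minimal sketch with deny-by-default semantics. The tool names, action types, `SCOPES`, and `check` are hypothetical, and `threading.Event` simply stands in for whatever kill switch a real product exposes.

```python
import threading

# Hypothetical scopes: which action types each tool may perform, and under what control.
SCOPES = {
    "mailbox":  {"read": "auto", "draft": "auto", "send": "approval"},
    "calendar": {"read": "auto", "propose": "auto", "confirm": "approval"},
    "crm":      {"read": "auto", "update": "approval"},
}

stop_event = threading.Event()  # set by a human to halt the worker immediately


def check(tool: str, action_type: str) -> str:
    """Return 'auto', 'approval', or 'denied'. Anything not explicitly scoped is denied."""
    if stop_event.is_set():
        return "denied"  # interruption overrides every scope
    return SCOPES.get(tool, {}).get(action_type, "denied")


print(check("mailbox", "send"), check("browser", "read"))  # approval denied
```

Because an unknown tool or action type falls through to `"denied"`, widening the blast radius requires an explicit scope change that can itself be reviewed.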

If a product is vague on any of those, it is probably optimized for demo velocity more than real-world trust.

Alyna should not be positioned as "an AI that runs your life without you." That framing sounds futuristic and breaks trust at exactly the moment executives need control.

The stronger framing is:

- a personal AI worker for the preparation layer

- a chief-of-staff operating model for the execution layer

- approval-first for outbound actions

- audit trail for accountability

That combination is more credible, more defensible, and more useful than broad autonomy marketed as magic.

- Personal AI workers are agentic systems that can pursue multi-step goals across tools, not just answer prompts.

- The category is growing because enterprise AI is moving from casual chat to structured workflows, and human-agent teams are becoming a real operating model.

- The right design is bounded autonomy: let the worker read, search, synthesize, and draft aggressively; require approval before consequential external action.

- Approval plus audit trail is what makes a worker scalable in executive contexts. Without both, "the agent did it" is not an acceptable operating answer.

- The right question is not whether an AI worker can act. It is which actions it should be allowed to take without you.

Alyna is built for that boundary: multi-step work, approval-first execution, and executive control at the final action layer. If you want the adjacent frameworks, continue with approval workflows for executives, why approval-first AI assistants win, and always-on vs on-demand AI assistant.

Alyna: your personal AI worker - draft-first, you approve, full audit trail.